There is, at present, no proper way to evaluate an AI coding agent on code the world cannot see. That is rather a problem, when you think about it, because the codebases that matter most are precisely the ones that are hidden, behind years of decisions made by engineers who left long ago and took most of the context with them. These are codebases where a single module might depend on configuration inherited from three services, where the naming conventions changed twice in 2021 and nobody updated the documentation, where the tests pass for reasons that are not entirely the reasons everyone assumes. No public benchmark has ever been built on code like this. The question of whether an AI agent can actually understand an enterprise codebase remains, for most organisations, entirely unanswered. People simply hope for the best, which is, one might note, not an engineering methodology.

We know this because we lived it. Every time we brought Potpie into an enterprise, the benchmarks we had scored well on meant nothing: HumanEval, MBPP, all the usual suspects. Everyone scores well on them. Those datasets have been sitting in training corpora for years. Scoring 90% on them proves roughly what it would prove if a student scored full marks on an exam whose answers had been circulating the dormitory all week. The scores look impressive. They indicate almost nothing about what the agent will do when faced with code it has never seen, written in patterns it was never trained on, inside a system whose architecture exists nowhere except in the heads of the people who built it.

The moment you point that same agent at a real codebase, one where services call other services in ways nobody documented, where architectural decisions sit buried in code nobody dares refactor, where a function that looks self-contained is actually load-bearing for three modules above it, the performance collapses. Not dramatically. Quietly. The agent gives answers that sound perfectly sensible and are, on closer examination, wrong. Wrong in the specific way that causes an engineer to trust the output, ship the change, and then spend two days debugging a failure that the agent could not have predicted because it never understood the system around the code it was looking at.

The benchmarks said one thing. The engineers in the room said another.

We stopped trusting the benchmarks and built our own

Potpie QA Evaluation Pipeline

We started with five production-grade open-source repositories. VS Code. Apache Airflow. Mattermost. Supabase. Tinygrad. Not toy projects. Not curated snippets. Real codebases with real mess in them. Codebases with monorepo structures, distributed system patterns, event-driven architectures, complex state machines, and mathematical abstractions that do not respond well to surface-level pattern matching.

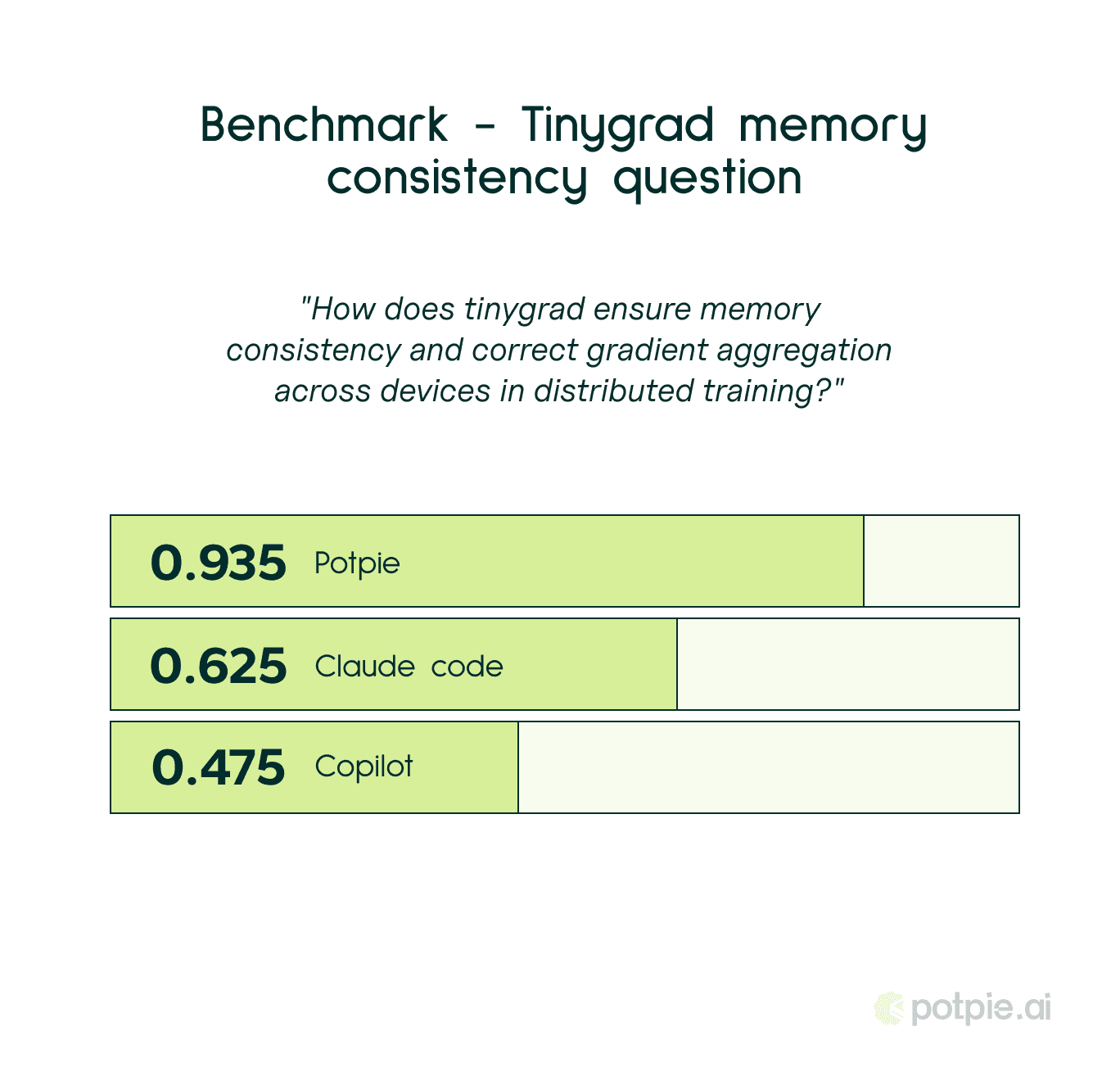

We wrote questions designed to be impossible to answer by reading a single file. "How does tinygrad ensure memory consistency and correct gradient aggregation across devices in distributed training?" You cannot answer that by finding the right function. The answer lives across the architecture. The agent has to trace it through multiple modules, understand how they interact at runtime, and reconcile what the code does with what the system is designed to guarantee. If it cannot do that, it is not understanding the codebase. It is reading files and guessing.

Every reference answer was cross-checked against multiple sources and verified by hand. No generated ground truths. No quiet shortcuts that make the evaluation look harder than it actually is. If a question could plausibly be answered through keyword matching or pattern recognition alone, it was discarded. The entire point was to test what happens when those shortcuts are unavailable.
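
The review described above was done by hand. As a rough mechanical proxy for the "no single-file answers" rule, a minimal sketch might keep only questions whose reference answer draws on several files. The `CandidateQuestion` type, the `evidence_files` field, the file paths, and the `min_files` threshold are all hypothetical, chosen for illustration rather than taken from the pipeline.

```python
from dataclasses import dataclass, field


@dataclass
class CandidateQuestion:
    """A draft benchmark question plus the files its reference answer cites."""
    text: str
    # Hypothetical field: paths the hand-written reference answer relies on.
    evidence_files: set[str] = field(default_factory=set)


def requires_cross_file_reasoning(question: CandidateQuestion, min_files: int = 2) -> bool:
    """Return True if the reference answer spans at least `min_files` files.

    min_files=2 is an illustrative threshold, not a value from the article.
    """
    return len(question.evidence_files) >= min_files


# Made-up candidates; the file paths are placeholders, not real repository paths.
candidates = [
    CandidateQuestion("Where is the retry limit defined?", {"config/defaults.py"}),
    CandidateQuestion(
        "How are gradients aggregated across devices?",
        {"multi.py", "tensor.py", "device.py"},
    ),
]

kept = [q for q in candidates if requires_cross_file_reasoning(q)]
print([q.text for q in kept])
```

A file count cannot tell whether keyword matching would still find the answer, so a filter like this would only narrow the pile before a human reads each question.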

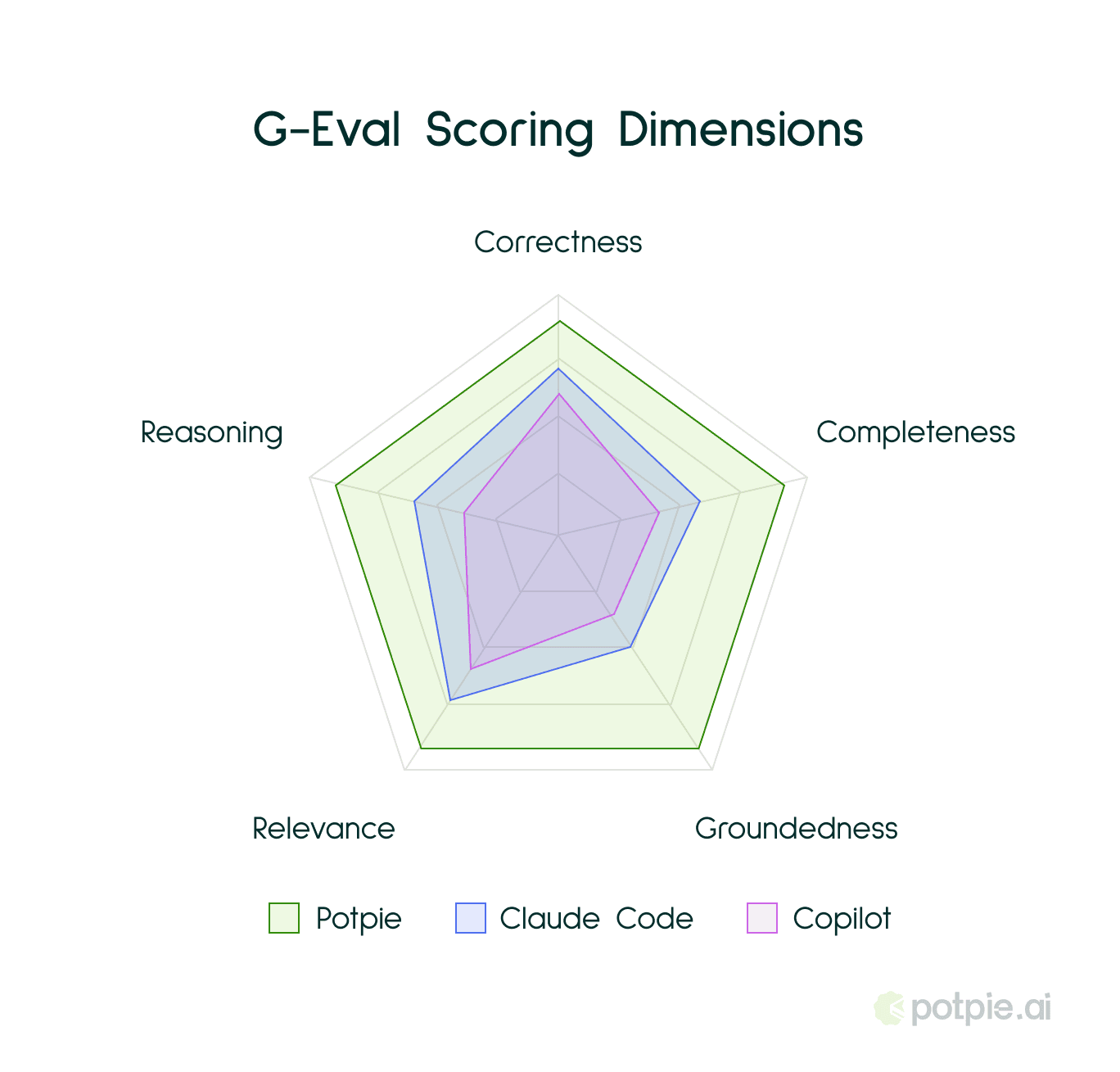

We score using G-Eval, an LLM-as-judge evaluation method, across five dimensions:

Correctness - are the facts right? Are the code references accurate, or is the agent citing functions that do not exist in the way it claims?

Completeness - did it catch the edge cases? Did it mention the alternative approaches, the failure modes, the boundary conditions that a senior engineer would flag in a review?

Groundedness - is it citing real code, or making things up with conviction? This is the dimension that separates an agent that has read the repository from one that is improvising based on what repositories like this usually look like.

Relevance - did it answer what was asked, or what it wished had been asked? Agents frequently pivot to adjacent topics they know more about. This catches that.

Reasoning - can it actually connect concepts that live in different parts of the codebase? Can it trace a data flow across service boundaries and explain why the system behaves the way it does?

The interesting thing about these scores is what they reveal in combination. An agent that scores high on correctness but low on groundedness is answering from memory, not from the code in front of it. It knows what tinygrad probably does because it has seen documentation about it, but it has not actually read the implementation. One that scores high on relevance but low on completeness understood the question perfectly and then gave you half the picture. Each pattern tells you something different. Without this breakdown you are, in effect, staring at a number that means nothing.
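
The two combinations named above can be written down as simple rules over a per-answer score profile. This is a sketch under stated assumptions: scores are taken to lie between 0 and 1, and the `HIGH` and `LOW` cut-offs, the `DimensionScores` type, and the `diagnose` function are hypothetical names and values, not part of the pipeline.

```python
from dataclasses import dataclass

# Assumed cut-offs for "high" and "low"; the article does not specify any.
HIGH = 0.8
LOW = 0.5


@dataclass(frozen=True)
class DimensionScores:
    """One answer's scores on the five dimensions, assumed to be in [0, 1]."""
    correctness: float
    completeness: float
    groundedness: float
    relevance: float
    reasoning: float


def diagnose(scores: DimensionScores) -> list[str]:
    """Map score combinations to the failure patterns described in the text."""
    findings = []
    if scores.correctness >= HIGH and scores.groundedness <= LOW:
        findings.append("answering from memory, not from the code")
    if scores.relevance >= HIGH and scores.completeness <= LOW:
        findings.append("understood the question, gave half the picture")
    return findings


# Made-up profile: right facts, weak grounding in the repository.
example = DimensionScores(
    correctness=0.9, completeness=0.85, groundedness=0.4, relevance=0.9, reasoning=0.7
)
print(diagnose(example))
```

Collapsing the same five numbers into one average would hide exactly the pattern this check surfaces.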

Now. What the data actually taught us.

Building the dataset was the straightforward part. Running our own agent against it was where things became uncomfortable. Certain patterns were not being recognised at all. Cross-module dependency chains. Deeply nested architectural constraints that only become visible when you traverse the graph far enough. Questions where the answer required tracing through four or five files before it cohered into anything sensible. Our agent was failing on these. Not failing in any obvious way, which would at least have been useful, but producing answers that sounded confident and were subtly, consequentially wrong. The kind of wrong that passes a casual code review. The kind that only surfaces in production, three weeks later, when something downstream breaks and nobody can immediately explain why.

We could see in the scores exactly where groundedness collapsed. Where reasoning simply stopped. Where the agent reached for general knowledge because the real answer required harder work. We traced these failures back to specific weaknesses in context retrieval and graph traversal, places where the agent was not pulling enough of the surrounding code to understand what it was looking at. We fixed them. Then we ran the dataset again. Found new gaps. Fixed those. Ran it again.

This is not a benchmark you pass once and frame on the wall. It is a loop. Generate harder questions. Expose the gaps. Fix the agent. Measure again. That is the work. And it is work that never finishes, because the moment you stop running your agent against data designed to break it, you stop knowing where it is weak.
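
The "measure again" step of that loop can be sketched as a comparison between two evaluation runs. Everything here is an assumption made for illustration: each run is modelled as a list of per-question score dictionaries, the numbers are invented, and `tolerance` is an arbitrary noise margin.

```python
from statistics import mean

DIMENSIONS = ("correctness", "completeness", "groundedness", "relevance", "reasoning")


def mean_by_dimension(run: list[dict[str, float]]) -> dict[str, float]:
    """Average each dimension over all questions in one evaluation run."""
    return {dim: mean(row[dim] for row in run) for dim in DIMENSIONS}


def weakest_dimension(run: list[dict[str, float]]) -> str:
    """Name the dimension with the lowest average score in a run."""
    averages = mean_by_dimension(run)
    return min(averages, key=averages.get)


def regressions(before, after, tolerance: float = 0.02) -> list[str]:
    """List dimensions whose average dropped by more than `tolerance`."""
    old, new = mean_by_dimension(before), mean_by_dimension(after)
    return [dim for dim in DIMENSIONS if new[dim] < old[dim] - tolerance]


# Invented scores for two questions, before and after a retrieval fix.
before = [
    {"correctness": 0.8, "completeness": 0.7, "groundedness": 0.4, "relevance": 0.9, "reasoning": 0.6},
    {"correctness": 0.9, "completeness": 0.7, "groundedness": 0.5, "relevance": 0.9, "reasoning": 0.7},
]
after = [
    {"correctness": 0.9, "completeness": 0.6, "groundedness": 0.8, "relevance": 0.9, "reasoning": 0.8},
    {"correctness": 0.9, "completeness": 0.6, "groundedness": 0.8, "relevance": 0.9, "reasoning": 0.8},
]

print(weakest_dimension(before))
print(regressions(before, after))
```

In this invented data the fix lifts groundedness but costs some completeness, which is the kind of new gap the next pass of the loop would have to pick up.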

The results, after that process:

On the tinygrad memory consistency question:

Potpie: 0.935

Claude: 0.625

Copilot: 0.475

Those numbers did not come from nowhere. They are the result of months of confronting our own agent's failures against data specifically designed to find them. The gap is not because we used a bigger model or a cleverer prompt. It is because we knew exactly where the agent was breaking and we fixed it, iteratively, until it stopped.

We are releasing a small, representative sample of this dataset publicly. The full dataset is considerably larger and continues to grow as we add new repositories and harder questions. But this portion is enough for any team to hold their own agents to the same standard we hold ours. Enough to see whether an agent is actually reasoning about code or whether it is simply performing fluency on topics it has seen before. Because the industry does not need more benchmarks that make everyone feel clever. It needs benchmarks that tell the truth.

Dataset: huggingface.co/datasets/potpieai/pot-qa25

Methodology: potpie.ai/evals
