The quality of an AI harness is determined almost entirely by the quality of context it has access to. Without the right context, even the most sophisticated model will produce answers that are technically correct but practically useless. With the right context, even simpler models can give you something genuinely actionable.

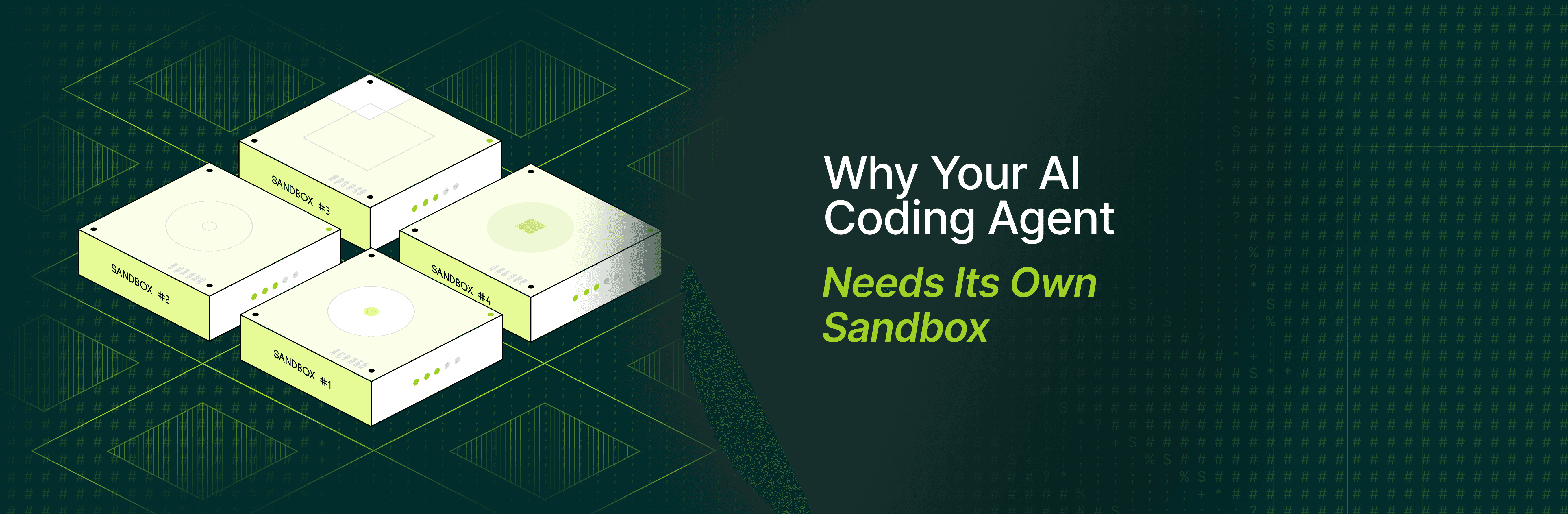

Most AI coding tools sell on the same promise: connect your repository and start immediately. For smaller projects with straightforward architectures, this actually works. The first 20% of context gets you 80% of the value, and a quick repo connection with surface-level parsing is often enough to get useful answers. But as codebase complexity grows, with more services, legacy code, and undocumented dependencies, this approach plateaus quickly. The gap between "surface context" and "deep context" widens, and what worked for a startup becomes a liability for an enterprise.

This is where the sales pitch starts to diverge from reality. Walk a standard AI tool demo into a real enterprise engineering org and something different happens. The outputs feel generic. The agent misses context that's obvious to anyone on the team. The performance difference between small or medium-complexity repos and enterprise repos becomes evident. This gap is more subtle and more common than people admit. Enterprise environments have implicit constraints, undocumented conventions, and layers of operational history that don't surface as obvious errors. Instead, you get silent failures: the agent produces answers that look right but miss critical context, or suggests solutions that ignore how your team actually works. The tool works fine; it's just working on incomplete information, and the cost shows up slowly in accumulated friction and declining trust.

This gap between demo and reality doesn't get talked about enough, and it leads to frustration, wasted investment, and tools being thrown out before they've really been given a chance. Understanding what it actually takes to make an AI tool useful in a complex environment changes how you evaluate options. So here's exactly how Potpie onboards every team, step by step, to make sure their agent coding workflow is contextual and consistent from day zero, not six months in after a lot of trial and error.

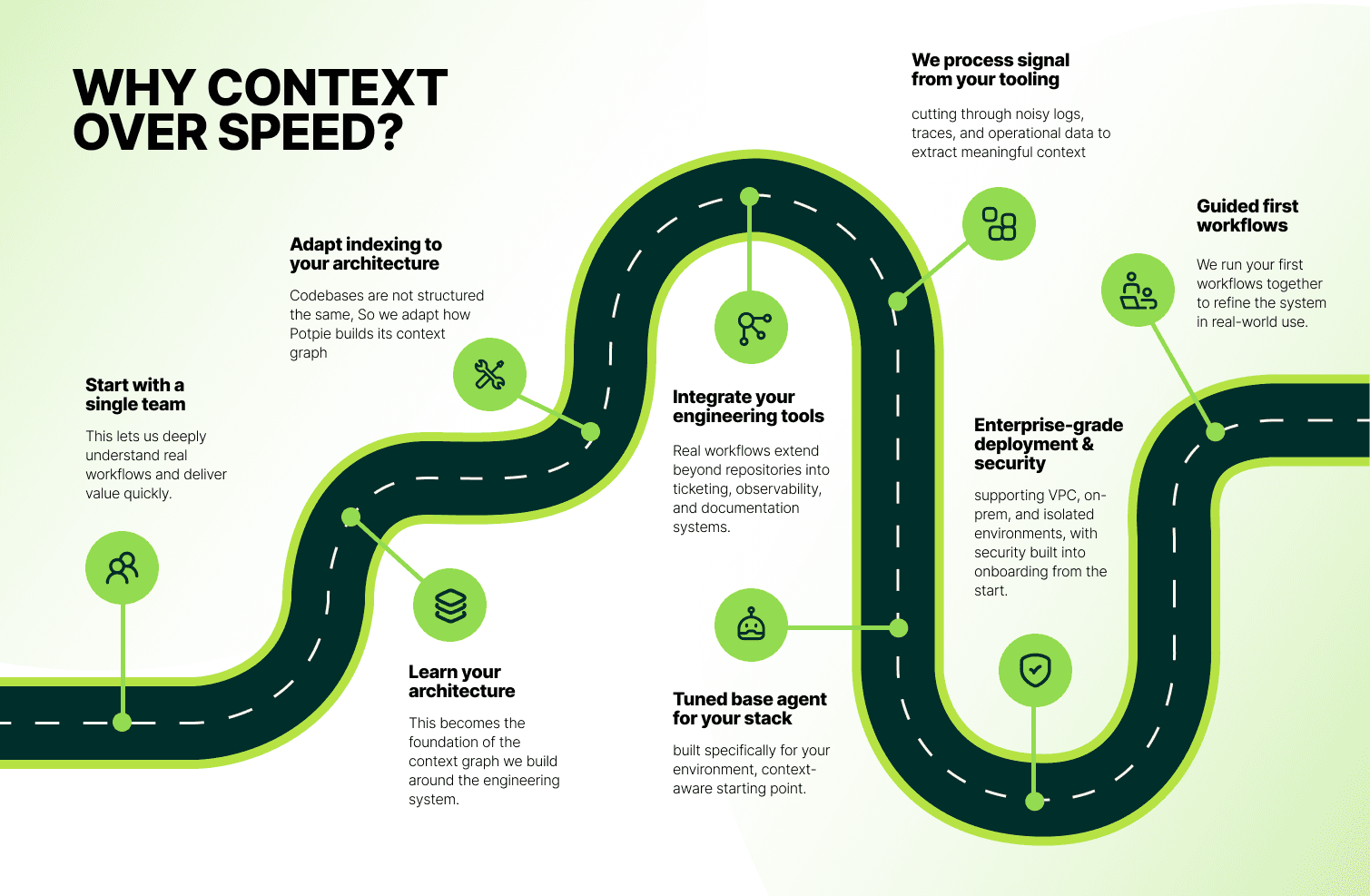

1. Start with one team

Not one department. One team. One specific workflow.

Rolling out across an entire organization on day one sounds appealing. But in practice, it spreads engineering resources so thin that nothing works well for anyone. You end up with a lot of people who have tried it once, everyone shrugging, and no clear signal about what needs fixing. Working with one team lets you dig into the depth of the problem and identify what works for that particular stack.

2. Spend real time mapping the architecture

Enterprise codebases are messy. They carry years of decisions made by people who have since left the company, using languages and frameworks chosen for reasons nobody fully remembers. Services call other services in ways that aren't documented anywhere except in someone's head. Before Potpie can work with any of this, we need to understand how it's actually wired. Not how it's supposed to be wired. Not what the architecture diagram from 2019 says. How it works today. That mapping becomes the foundation of the context we build. Without it, every answer the agent gives is just a guess. Customizing how that context gets collected (prioritizing the right services, understanding internal naming conventions, mapping actual call patterns rather than declared dependencies) makes the difference between an agent that sounds right and one that actually helps.
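To make "actual call patterns rather than declared dependencies" concrete, here is a minimal sketch of the comparison. It is an illustration under stated assumptions, not Potpie's implementation: the service names, the `declared` manifest data, and the `observed_calls` pairs (imagined as extracted from traces) are all hypothetical.

```python
from collections import defaultdict

# Hypothetical data: what each service's manifest says it depends on.
declared = {
    "checkout": {"payments", "inventory"},
    "payments": {"ledger"},
}

# Hypothetical (caller, callee) pairs, as they might be extracted from traces.
observed_calls = [
    ("checkout", "payments"),
    ("checkout", "pricing"),  # called in practice, never declared
    ("payments", "ledger"),
]


def diff_dependencies(declared, observed_calls):
    """Compare declared dependencies with calls actually observed."""
    observed = defaultdict(set)
    for caller, callee in observed_calls:
        observed[caller].add(callee)

    report = {}
    for service in sorted(set(declared) | set(observed)):
        decl = declared.get(service, set())
        obs = observed.get(service, set())
        report[service] = {
            "undeclared": sorted(obs - decl),  # real calls missing from the manifest
            "unused": sorted(decl - obs),      # declared but never seen in traces
        }
    return report


report = diff_dependencies(declared, observed_calls)
for service, gaps in report.items():
    print(service, gaps)
```

The "undeclared" entries are the interesting ones: they are the wiring that exists only in production behavior and in someone's head.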

3. Customize how context gets indexed

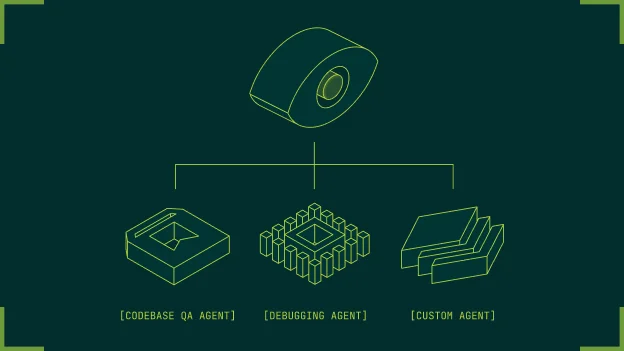

Generic indexing and tool strategies assume standard project layouts. That assumption fails for monorepos with 40+ services, micro-frontends, or domain layers that don't follow convention. We configure how the agent traverses your codebase: which files to prioritize, how to weight cross-service dependencies, which naming conventions matter, and how to resolve references that don't match the directory structure.
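As a rough sketch of what such a configuration could look like, the snippet below models file prioritization and reference resolution. Everything in it is an assumption made for illustration: the `IndexingConfig` class, the glob patterns, the weights, and the `@billing/` alias are hypothetical and do not describe Potpie's actual configuration format.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatch


@dataclass
class IndexingConfig:
    # (glob pattern, weight) pairs; the first matching pattern wins.
    priorities: list = field(default_factory=list)
    default_weight: float = 1.0
    # Aliases for references that don't match the directory structure.
    path_aliases: dict = field(default_factory=dict)

    def weight_for(self, path: str) -> float:
        """Return the indexing weight for a file path."""
        for pattern, weight in self.priorities:
            if fnmatch(path, pattern):
                return weight
        return self.default_weight

    def resolve(self, reference: str) -> str:
        """Rewrite an aliased reference to its real location in the repo."""
        for alias, real_prefix in self.path_aliases.items():
            if reference.startswith(alias):
                return real_prefix + reference[len(alias):]
        return reference


# Hypothetical monorepo settings.
config = IndexingConfig(
    priorities=[
        ("services/billing/*", 3.0),  # core domain: index first
        ("*/generated/*", 0.1),       # generated code: mostly noise
        ("*_test.py", 0.5),
    ],
    path_aliases={"@billing/": "services/billing/src/"},
)

print(config.weight_for("services/billing/api/handlers.py"))
print(config.resolve("@billing/invoice.py"))
```

Keeping the rules as ordered data rather than code makes it easy to review them with the team that owns the repository.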

4. Bring in the full toolchain

The repository is not the whole story. Not even close. Real engineering work touches Jira, Datadog, Confluence, internal deployment systems, and custom tools your team has built up over the years. An agent that only sees the code is missing the context that makes any of it make sense. When we pull in the toolchain, the answers start sounding less generic and more like a senior engineer who knows the full picture.

5. Pull signals from your operational data

Logs, traces, alerts, and incident notes contain the real history of your system. They're also unstructured, noisy, and stored across multiple systems.

Most agents skip this entirely because ingestion is non-trivial. We clean, parse, and index operational data so the agent can reference it during incidents, debugging sessions, and postmortems. This takes the tool from just writing code to helping debug issues in production.
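The clean, parse, and index steps can be sketched in a few lines. This is a simplified illustration, not a description of Potpie's pipeline: the log line format, the `parse_line` and `index_by_service` helpers, and the sample lines are all assumptions. Real operational data is far messier and usually needs a parser per source.

```python
import re
from collections import defaultdict
from datetime import datetime

# Assumed line format: "<ISO timestamp> <LEVEL> <service> <message>"
LOG_LINE = re.compile(
    r"^(?P<ts>\S+)\s+(?P<level>[A-Z]+)\s+(?P<service>[\w-]+)\s+(?P<message>.+)$"
)


def parse_line(line):
    """Turn one raw log line into a structured record, or None if it is noise."""
    match = LOG_LINE.match(line.strip())
    if match is None:
        return None
    try:
        ts = datetime.fromisoformat(match["ts"])
    except ValueError:
        return None
    return {
        "ts": ts,
        "level": match["level"],
        "service": match["service"],
        "message": match["message"],
    }


def index_by_service(lines, levels=("ERROR", "WARN")):
    """Keep only the chosen levels and group the records by service."""
    index = defaultdict(list)
    for line in lines:
        record = parse_line(line)
        if record and record["level"] in levels:
            index[record["service"]].append(record)
    return index


raw = [
    "2024-05-01T10:00:00 INFO checkout request served",
    "2024-05-01T10:00:02 ERROR payments timeout calling ledger",
    "garbage line without structure",
    "2024-05-01T10:00:05 WARN payments retrying ledger call",
]
index = index_by_service(raw)
for record in index["payments"]:
    print(record["ts"], record["level"], record["message"])
```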

6. Tune retrieval for your specific stack

Different tech stacks need different context strategies. A Spring Boot monolith and a Kubernetes-based microservices architecture don't benefit from the same retrieval patterns. We configure which context sources the agent queries, how it weights recency versus relevance, and which tools it has access to for each type of question. This upfront work means engineers don't have to pad every prompt with extra background, as they would with a harness that isn't context-aware.
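One way to picture the recency-versus-relevance trade-off is a blended score whose weights depend on the type of question. The sketch below is illustrative only: the `Candidate` fields, the exponential half-life decay, and the `PROFILES` values are assumptions chosen for the example, not Potpie's actual retrieval logic.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    source: str
    relevance: float  # 0..1, e.g. from a similarity search
    age_days: float


def score(candidate, recency_weight, half_life_days):
    """Blend relevance with a recency term that halves every half_life_days."""
    recency = 0.5 ** (candidate.age_days / half_life_days)
    return (1 - recency_weight) * candidate.relevance + recency_weight * recency


# Hypothetical per-question-type profiles.
PROFILES = {
    "incident": {"recency_weight": 0.6, "half_life_days": 7},
    "design": {"recency_weight": 0.1, "half_life_days": 180},
}


def rank(candidates, question_type):
    """Order candidates by blended score for the given question type."""
    profile = PROFILES[question_type]
    return sorted(candidates, key=lambda c: score(c, **profile), reverse=True)


candidates = [
    Candidate("adr-2021-queue-choice", relevance=0.9, age_days=900),
    Candidate("alert-yesterday", relevance=0.6, age_days=1),
]

print([c.source for c in rank(candidates, "incident")])
print([c.source for c in rank(candidates, "design")])
```

With these example numbers, the fresh alert ranks first for an incident question, while the older architecture decision record ranks first for a design question.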

7. Build security constraints from day one

Enterprise deployment has real blockers: air-gapped networks, SOC 2 requirements, data residency rules, strict RBAC. Tools that treat these as afterthoughts never make it past the security review. We map your compliance requirements before integration starts, so deployment doesn't stall in legal limbo partway through.
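As a small illustration of enforcing such constraints before context ever reaches the agent, the sketch below filters candidate context by role and region. The `ContextChunk` type, the role names, and the region labels are hypothetical; real RBAC and residency rules come from your identity provider and compliance policies, not from a list in code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextChunk:
    source: str
    allowed_roles: frozenset
    region: str


def visible_chunks(chunks, user_roles, allowed_regions):
    """Keep only chunks the user may see and that live in a permitted region."""
    return [
        chunk
        for chunk in chunks
        if chunk.allowed_roles & user_roles and chunk.region in allowed_regions
    ]


# Hypothetical context items with access metadata attached at index time.
chunks = [
    ContextChunk("billing/README.md", frozenset({"billing-dev", "sre"}), "eu"),
    ContextChunk("payroll/schema.sql", frozenset({"finance"}), "eu"),
    ContextChunk("search/runbook.md", frozenset({"sre"}), "us"),
]

visible = visible_chunks(chunks, user_roles={"sre"}, allowed_regions={"eu"})
print([chunk.source for chunk in visible])
```

Filtering at retrieval time, rather than trusting the model to withhold information, keeps restricted content out of the prompt entirely.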

8. Train your engineers on how to use it

Most engineers haven't worked with context-aware agents before. Without guidance, they treat it like ChatGPT, ask generic questions, get generic answers, and stop using it.

We show them how the context layer works, what information the agent has access to, and how to structure questions that leverage that context. Once they understand how the agent thinks, they ask better questions, supply more of the right context, and start using it in ways that weren't obvious at the start.

As trust builds, engineers begin experimenting with new development workflows, and that is where the productivity gains start to show.

Single-click onboarding assumes the environment is already simple. Enterprise environments almost never are.

This changes how you evaluate AI tools. Not "can I connect it in five minutes?" but "what does it take to make this useful in our system?"

That's the question we're built around. It takes more upfront effort, but it's the only approach that works when the environment is real.

And here is how we actually onboarded our first customer.

Our first customer was a SaaS company that wanted to automatically generate and maintain integration tests for every pull request. This wasn't a plug-and-play onboarding. Over 18 days, we worked closely with their team to deeply integrate into their engineering workflow. We parsed their entire codebase into a knowledge graph, connected context across their tools, and set up a structured spec layer so agents could operate with consistency. Instead of jumping to full rollout, we started with a focused pilot across five engineers, iterated based on real usage, and only then expanded across the team.

What became clear during this process was that onboarding doesn't end at deployment; it ends at adoption. The final four days were spent purely on training and driving usage, ensuring engineers actually integrated the system into their day-to-day work. In practice, it doesn't matter whether a tool is technically integrated. What matters is how many engineers actively use it. That is the metric that determines whether a product becomes embedded in the workflow or quietly gets sidelined.

As adoption grew, the scope of usage expanded beyond the initial testing use case. What started as automated integration test generation gradually extended into multiple parts of the software development lifecycle, from debugging and RCA to design and implementation workflows. By focusing on real usage and not just deployment, this engagement evolved from a narrow pilot into a broader, long-term partnership, with the system becoming a consistent layer across the team's engineering processes.
