Continuing from our last conversation, we established that compliance cannot be an afterthought; it has to be built into the architecture.

An AI agent with raw access to the entire codebase can still fumble when making precise changes because it lacks the surrounding “why” behind design decisions and existing constraints, and will often fill those gaps with its own interpretation rather than the system’s actual intent.

The problem is that these agents also have no idea what confidential means, no instinct for why the payment gateway secrets are different from the README, and absolutely no hesitation about pulling both into the same answer. That's not a hypothetical risk.

This is how standard RAG implementations behave in production today. Here's what I've come to believe after reading the research and implementations around this: the answer isn't to give the AI less. The answer is to give it better-structured information. Security and utility aren't on opposite ends of the same dial. If you treat them that way, you'll always lose one trying to protect the other.

The Flat Data Trap: Why Standard Retrieval Fails

A lot of AI tools start from the same basic recipe: slice your code into small chunks, embed those chunks into a vector database, and when you ask a question, pull back whatever looks most similar and feed it to the model. Newer coding assistants have made this work much better with smarter chunking and ranking, but under the hood it’s still that same pattern.

But even when the retrieval quality is excellent, the underlying mechanism is still driven by semantic similarity, not by a deep understanding of your security posture or clearance model. The vector index doesn’t inherently know which parts of the codebase are PCI‑scoped, tenant‑isolated, or admin‑only; it just knows what looks relevant.

The semantic leak in practice

Imagine a developer asks the AI:

“How do we handle user sessions?”

In a flat RAG setup:

The index contains a chunk from docs/README.md describing your general session model.

It also contains a chunk from src/auth/session_manager.py that implements your proprietary handshake and production secrets.

Because both chunks are semantically similar to “user sessions,” the agent happily pulls both into its context window. It then tries to be maximally helpful and may start explaining details of your session validation, token rotation strategy, or internal security checks that the requester should never see.

This isn’t a bug; it’s how the architecture was designed. Retrieval by similarity has no concept of clearance level.
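To make the leak concrete, here is a minimal sketch of similarity-only retrieval. The file paths come from the example above; the chunk text, the extra `docs/CONTRIBUTING.md` entry, and the bag-of-words `embed` function (a stand-in for a real embedding model) are assumptions made for illustration.

```python
import math
import re
from collections import Counter

# Toy corpus: paths from the example above, chunk text invented for illustration.
CHUNKS = {
    "docs/README.md": "Overview of how user sessions work: login, expiry, logout.",
    "src/auth/session_manager.py": "Validate user sessions, rotate tokens, sign the handshake.",
    "docs/CONTRIBUTING.md": "How to open a pull request and run the linter.",
}

def embed(text: str) -> Counter:
    """Stand-in for an embedding model: a bag-of-words term count."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, k: int = 2) -> list[str]:
    """Rank chunks purely by similarity; nothing here knows who is asking."""
    q = embed(query)
    ranked = sorted(CHUNKS, key=lambda path: cosine(q, embed(CHUNKS[path])), reverse=True)
    return ranked[:k]

top = retrieve("How do we handle user sessions?")
print(top)
```

Note that `retrieve` takes no caller identity at all. Both the README chunk and the session manager chunk rank highest because they share vocabulary with the question, which is the whole problem.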

As teams have started putting RAG into production, they’ve already hit this wall. Elastic, for example, shows how a naive HR chatbot can leak compensation data until you wire RBAC into the retrieval step. So the risk is no longer theoretical; it's a direct consequence of treating everything as a flat pile of semantically searchable text.

A Better Abstraction: The Context Graph

What if instead of a pile of text, your codebase was represented as a map?

Not a visual map. A structured one, a graph of symbols, relationships, and governance rules all living together: Functions, classes, files, and services become nodes. Calls, imports, ownership, and dependencies become edges. Permissions, team ownership, and regulatory obligations attach as attributes on those nodes and edges.
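As a rough sketch of that structure, the snippet below models nodes, edges, and their governance attributes with plain dataclasses. The class names, node ids, and attribute keys (`owner`, `classification`) are hypothetical choices for illustration, not a prescribed schema.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    id: str
    kind: str                                   # e.g. "function", "class", "file", "service"
    attrs: dict = field(default_factory=dict)   # governance attributes live here

@dataclass
class Edge:
    src: str
    dst: str
    kind: str                                   # e.g. "calls", "imports", "documented_by"
    attrs: dict = field(default_factory=dict)

@dataclass
class ContextGraph:
    nodes: dict = field(default_factory=dict)   # node id -> Node
    edges: list = field(default_factory=list)

    def add_node(self, id, kind, **attrs):
        self.nodes[id] = Node(id, kind, attrs)

    def add_edge(self, src, dst, kind, **attrs):
        self.edges.append(Edge(src, dst, kind, attrs))

    def neighbors(self, node_id, kind=None):
        """Targets of outgoing edges, optionally filtered by edge kind."""
        return [e.dst for e in self.edges
                if e.src == node_id and (kind is None or e.kind == kind)]

graph = ContextGraph()
graph.add_node("api.checkout", "function", owner="payments-team", classification="internal")
graph.add_node("gateway.charge", "function", owner="payments-team", classification="pci")
graph.add_node("docs.runbook", "file", owner="sre", classification="public")
graph.add_edge("api.checkout", "gateway.charge", "calls")
graph.add_edge("api.checkout", "docs.runbook", "documented_by")
```

The point of the sketch is that ownership and classification sit on the same objects the agent traverses, so a policy check never has to look anywhere else.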

This isn't a new idea, by the way. In the security world, Code Property Graphs (CPGs) have used this pattern for years: tools like Joern represent code as a unified graph merging syntax, control flow, and data flow, so you can query large codebases structurally and trace sensitive data paths.

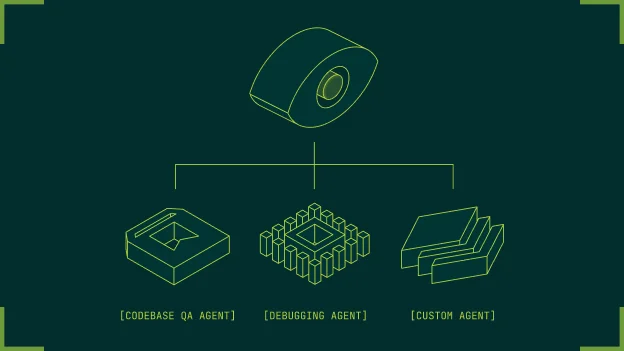

The Context Graph brings all of these traditions together. It has two symbiotic layers.

The first is the Knowledge Layer, the grounded graph of code symbols, logic flows, and dependencies. The agent is traversing a precise map of how your software actually behaves.

The second is the Context Layer, the governance layer: organizational structure, roles, teams, PR discussions, Slack threads, comments, and so on. Every traversal the agent makes is constrained by relevance, structure, and policy.

Semantic Sandboxing: RBAC at the Atomic Level

Once your codebase is represented as a graph, you can enforce access control at a much finer granularity than “index A vs index B.” Semantic Sandboxing is the practice of applying RBAC concepts directly to the graph itself, instead of only to whole indices or documents.

Instead of granting a user all‑or‑nothing access to an index, you:

Attach access-control metadata to specific nodes.

Attach constraints to edges.

Evaluate permissions at query time as the AI walks the graph.

In other words, retrieval becomes RBAC‑aware by construction: the agent can only traverse parts of the graph that the caller’s role is authorized to see.
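One way to picture this is a breadth-first walk that checks each node's label against the caller's role before stepping onto it. This is a minimal sketch under stated assumptions: the node names, labels, and role table are invented, and only node-level metadata is checked (edge constraints would add a second check of the same shape).

```python
from collections import deque

# Hypothetical graph: each node carries one classification label.
NODE_LABEL = {
    "api.checkout": "internal",
    "api.errors": "internal",
    "docs.runbook": "public",
    "gateway.charge": "pci",
    "gateway.retry_codes": "pci",
}
EDGES = {
    "api.checkout": ["gateway.charge", "docs.runbook", "api.errors"],
    "gateway.charge": ["gateway.retry_codes"],
    "api.errors": ["docs.runbook"],
}
# Hypothetical role table: which labels each role may traverse.
ROLE_LABELS = {
    "developer": {"public", "internal"},
    "payments-engineer": {"public", "internal", "pci"},
}

def reachable_context(start, role):
    """Return (visited, blocked): nodes the role may see, and nodes pruned at the boundary."""
    allowed = ROLE_LABELS.get(role, set())
    if NODE_LABEL[start] not in allowed:
        return [], [start]
    visited, blocked = [start], []
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for nxt in EDGES.get(current, []):
            if NODE_LABEL[nxt] not in allowed:
                # Pruned: the walk never steps onto this node or anything behind it.
                if nxt not in blocked:
                    blocked.append(nxt)
                continue
            if nxt not in visited:
                visited.append(nxt)
                queue.append(nxt)
    return visited, blocked

dev_visited, dev_blocked = reachable_context("api.checkout", "developer")
pay_visited, pay_blocked = reachable_context("api.checkout", "payments-engineer")
```

For the `developer` role, `gateway.retry_codes` never shows up in either list: the walk stops at `gateway.charge`, so anything reachable only through it is simply not part of the context.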

This is also where the broader RAG ecosystem is moving. Elastic, for instance, shows how to combine RAG with RBAC so retrieval respects index‑, document‑, and field‑level permissions; a manager can see salary data that an engineer cannot, even through the same chatbot. Other vendors integrate RAG with centralized authorization systems to enforce document‑level security at query time for enterprise support scenarios. Our approach pushes the same idea all the way down to the level of individual symbols and dependencies in your code graph.

What does this look like in practice?

A developer asks: "How do we handle payment authorization failures?"

In a flat RAG setup, they might see low-level details about your payment gateway integration, retry logic with vendor-specific decline codes, maybe hints about fraud detection thresholds or risk scoring. None of that is information they should have.

With Semantic Sandboxing, the sensitive nodes (encryption logic, secrets management, gateway internals) are not part of the traversable subgraph for that role. The agent's working context literally does not contain those symbols. It builds its answer from the non-sensitive part of the graph: high-level flows, public API contracts, team runbooks.

And here's the important part: the agent isn't "keeping a secret." It isn't being instructed to withhold something it can see. For that session, the secret simply does not exist as reachable context. That's a fundamentally different and far more trustworthy architecture.

Pruning the “Poison” Before Ingestion

If you are serious about compliance, you cannot allow sensitive data (API keys, hardcoded credentials, PII, PHI, internal IPs) to ever enter long-term AI memory in the first place.

The Context Graph gives you clean interception points before your code is even indexed.

Pre-ingestion scrub pipeline

Symbolic analysis

Static analysis and parsing distinguish code from data:

Function signatures, ASTs, and control/data flow are retained.

Literals that look like secrets are flagged for exclusion or redaction.

This mirrors how CPG-based tools track flows from sources to sinks to find vulnerabilities and sensitive paths.

Logic extraction

You index intent and contract:

“This function validates a JWT and enforces a 30‑minute session timeout.”

“This method writes an audit log entry for every administrative permission change.”

The AI can reason over behavior and guarantees, for example “all admin operations are logged,” without ever seeing the actual signing keys, algorithm parameters, or internal IP ranges.

Sanitization and policy tagging

Before anything is committed to the graph:

Secrets and high-risk literals are dropped or replaced with structured placeholders.

Nodes that touch regulated data (e.g., personal data under GDPR) are tagged with classification labels so later queries can be constrained according to data minimization and regional rules.

The data that the AI receives is a cleaned, high-fidelity representation of your logic and intent, not a raw dump of your vulnerabilities.
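The pipeline above can be sketched with Python's standard `ast` module: parse the source, replace secret-looking string literals with a placeholder, and keep function names and docstrings as the "contract" to index. The two regex patterns, the `sk_live_` key format, and the sample source are assumptions for illustration; a real scanner would use a much richer rule set. `ast.unparse` requires Python 3.9 or later.

```python
import ast
import re

# Hypothetical heuristics for "literals that look like secrets".
SECRET_PATTERNS = [
    re.compile(r"^sk_(live|test)_[A-Za-z0-9]{8,}$"),   # API-key-shaped tokens (assumed format)
    re.compile(r"^\d{1,3}(\.\d{1,3}){3}$"),            # IPv4 address literals
]
PLACEHOLDER = "<REDACTED>"

class Redactor(ast.NodeTransformer):
    """Replace secret-looking string constants in place, counting each hit."""
    def __init__(self):
        self.redacted = 0

    def visit_Constant(self, node):
        if isinstance(node.value, str) and any(p.match(node.value) for p in SECRET_PATTERNS):
            node.value = PLACEHOLDER
            self.redacted += 1
        return node

def scrub(source: str):
    """Return (sanitized source, {function name: docstring}, number of redactions)."""
    tree = ast.parse(source)
    redactor = Redactor()
    redactor.visit(tree)
    contracts = {
        fn.name: ast.get_docstring(fn)
        for fn in ast.walk(tree)
        if isinstance(fn, ast.FunctionDef)
    }
    return ast.unparse(tree), contracts, redactor.redacted

SAMPLE = '''
API_KEY = "sk_live_abcdef123456"
AUDIT_HOST = "10.0.4.17"

def validate_session(token):
    """Validates a JWT and enforces a 30-minute session timeout."""
    return check(token, API_KEY)
'''

clean, contracts, count = scrub(SAMPLE)
print(clean)
```

The structure of the code and the docstring survive, while the key and the internal address do not, so what reaches the graph is the behavior and its stated guarantee rather than the literal values.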

Turning Compliance into a Glass Box

I want to be honest about the biggest actual blocker to AI adoption in regulated industries, because I don't think it's talked about enough. It's not just leak risk. It's the black box problem.

If an AI suggests a code change, or generates a remediation plan, or helps make an access decision, you need to be able to answer three questions:

Why did it do that?

Which inputs did it rely on?

Can we prove to an auditor that this behavior is consistent with our policies?

With a Context Graph, you get deterministic traceability. Every answer can be traced back to the exact nodes and edges traversed. You know which symbols were read, which policies were evaluated, which branches were pruned because of clearance restrictions. You can replay that traversal. You can export it as evidence.

That matters for real compliance frameworks:

ISO 42001 expects transparent, governed AI behavior and risk documentation. A traversal log linking outputs back to specific code nodes and policies fits directly into that.

GDPR's data minimization principle requires that personal data be adequate, relevant, and limited to what's necessary. Policy-aware graph retrieval ensures only the nodes needed for a given task and role are ever exposed.

SOC 2 demands evidence that access controls operate effectively over time. Logging not just what was asked but which parts of the graph were traversed and which sensitive regions were blocked gives you audit-ready evidence that your AI actually respects your controls.

Instead of “agent did something, trust us,” you get a glass-box story: this agent, with this role, walked these nodes, under these policies, at this time.
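A traversal record of that kind might look like the sketch below. The field names and the example values are hypothetical, and the SHA-256 digest only detects later modification of a stored record; real tamper evidence would additionally need signing or an append-only store.

```python
import hashlib
import json
from datetime import datetime, timezone

def _digest(body: dict) -> str:
    """SHA-256 over the canonical (sorted-key) JSON form of the record body."""
    canonical = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()

def traversal_record(agent, role, query, visited, blocked, policy_version, graph_version):
    """Build one structured log entry: who walked what, under which policy and graph version."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "role": role,
        "query": query,
        "visited_nodes": list(visited),
        "blocked_nodes": list(blocked),
        "policy_version": policy_version,
        "graph_version": graph_version,
    }
    record["digest"] = _digest(record)
    return record

def verify(record: dict) -> bool:
    """Recompute the digest over everything except the digest field itself."""
    body = {k: v for k, v in record.items() if k != "digest"}
    return _digest(body) == record["digest"]

rec = traversal_record(
    agent="code-assistant",
    role="developer",
    query="How do we handle payment authorization failures?",
    visited=["api.checkout", "docs.runbook"],
    blocked=["gateway.charge"],
    policy_version="2024-06-01",   # hypothetical version identifiers
    graph_version="a1b2c3d",
)
print(json.dumps(rec, indent=2))
```

Storing the policy and graph versions alongside the path is what makes a replay meaningful: the same role against the same graph version should produce the same visited and blocked sets.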

What Implementation Actually Looks Like

I'll spare you the marketing version and give you the mechanics.

Ingestion and parsing: repositories parsed into abstract syntax trees, symbols extracted, call and dependency graphs built, metadata attached: repo, branch, service, owner team, test coverage.

Governance overlay: syncing identities from your IdP (Okta, Azure AD, whatever you use), mapping services and code paths to data classifications (public, internal, confidential, PII, PCI, tenant-scoped), tagging nodes and edges with applicable regulatory scope.

Policy engine at query time: every query evaluated before graph traversal begins. Edges that would cross into disallowed scopes simply aren't followed. The LLM only ever sees a projection of the graph that's already policy-safe for that identity and purpose.

Explainability infrastructure: traversal paths, policy decisions, and graph versions stored as structured logs, surfaced to security teams and compliance officers as part of your AI control set.
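For the governance overlay step in particular, mapping code paths to data classifications can start as an ordered rule table. This is a sketch under assumptions: the path patterns and the default label are invented, and the labels are taken from the classification list above. It uses `fnmatchcase` from the standard library, whose `*` also matches path separators.

```python
from fnmatch import fnmatchcase

# Hypothetical rules, most specific first: the first matching pattern wins.
CLASSIFICATION_RULES = [
    ("src/payments/secrets/*", "confidential"),
    ("src/payments/*", "PCI"),
    ("src/users/*", "PII"),
    ("docs/*", "public"),
]
DEFAULT_LABEL = "internal"   # assumed fallback for anything unmatched

def classify(path: str) -> str:
    """Return the classification label for a repository path."""
    for pattern, label in CLASSIFICATION_RULES:
        if fnmatchcase(path, pattern):
            return label
    return DEFAULT_LABEL

def tag_nodes(paths):
    """Attach a classification to every file node before it enters the graph."""
    return {path: {"classification": classify(path)} for path in paths}

tags = tag_nodes([
    "src/payments/gateway/client.py",
    "src/payments/secrets/keys.py",
    "src/users/profile.py",
    "docs/README.md",
    "src/util/strings.py",
])
```

Ordering the rules from most to least specific keeps the table readable for reviewers, which matters because compliance staff, not only engineers, will need to audit it.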

The era of blind AI is ending, whether we choose it or not. Regulated industries are starting to ask hard questions. Compliance teams are getting involved in AI deployments they previously ignored. And the early builders who treated governance as an afterthought are going to have a very uncomfortable couple of years.

The good news, the genuinely good news, is that you don't have to choose between a capable AI and a safe one. Structure, hierarchy, and semantic rules make your codebase more useful to an AI and less dangerous in its hands.

Compliance isn't a layer you add on top of the product once you've shipped it. It's the architecture. Get the architecture right, and the rest becomes much, much easier.
