In enterprise-grade applications, compliance is more than a regulatory checkbox. It is a security layer: a deliberate architectural choice that prevents vulnerabilities from exposing sensitive information. When we at Potpie built our SOC 2, GDPR, and ISO compliance programs, we realized they were not overhead to tolerate but essential safeguards that protect both your systems and your customers' data.

The AI era has made this urgency visceral. LLMs and AI agents ingest staggering volumes of data: customer information, training datasets, inference logs, model parameters. A chatbot that automates customer service seamlessly also processes personally identifiable information at scale. An AI agent that simplifies workflow automation handles operational secrets and business-critical data. The convenience of automation and the risk of data exposure have become inseparable.

This is where compliance shifts from mere documentation to guidelines that shape product development. You can't separate AI governance from security. Every control you implement simultaneously serves two purposes: regulatory compliance and data protection.

If you've already achieved SOC 2 Type II and GDPR compliance, you have a strong foundation. But AI changes the equation fundamentally. You need ISO 42001 for AI-specific governance, EU AI Act compliance for legal protection, and integration across all frameworks to close gaps and eliminate redundancy.

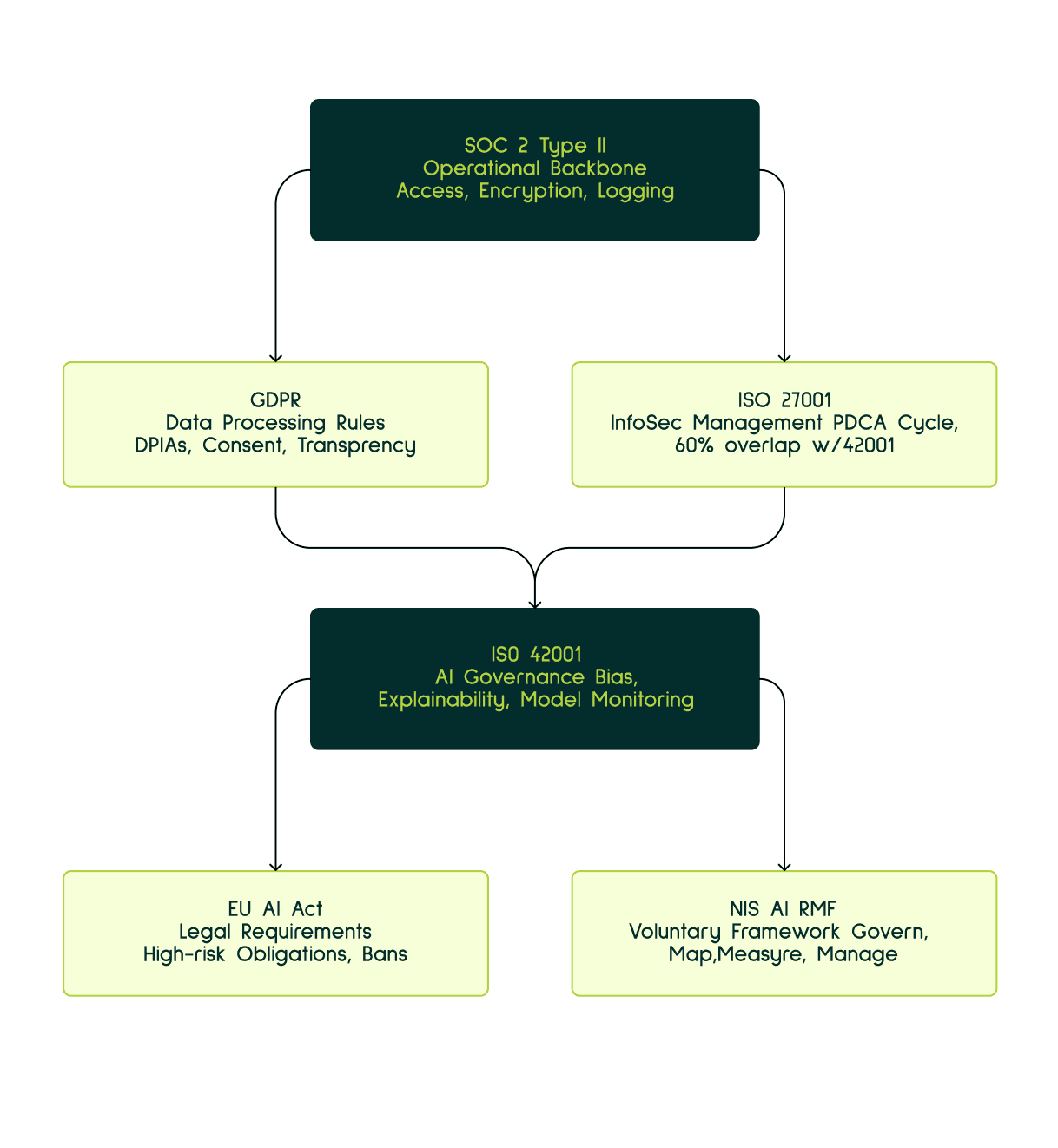

The Compliance Stack

Think of compliance for AI systems as stacked layers, not isolated checkboxes.

At the base, SOC 2 Type II is about proving that your day-to-day operations are secure and consistent over time. It covers access controls, encryption, logging, monitoring, and incident response. Most importantly, it gives you a continuous evidence pipeline. This is the operational backbone for almost every other compliance effort. What it doesn’t cover are AI-specific risks like bias or explainability, which is why SOC 2 alone is never sufficient for AI companies.

That gap is where ISO 42001 comes in.

Before getting there, though, there’s GDPR, which is non-negotiable if you touch EU personal data. GDPR governs how and why data is collected and used, through requirements such as lawful basis, consent, DPIAs, and transparency. For AI systems, this extends further: impact assessments before training on personal data, safeguards against discriminatory outcomes, and explainability when automated decisions affect individuals. GDPR sets the legal boundary conditions within which your AI must operate.

Zooming out to security management, ISO 27001 provides the broader information security management system. It uses the standard ISO structure of 10 clauses and a Plan-Do-Check-Act cycle, and it overlaps heavily with ISO 42001 (roughly 60%). If you already have ISO 27001, you’re not starting from scratch: many of the mechanisms you have already implemented can be reused and extended.

ISO 42001 builds directly on that foundation but focuses entirely on AI. It formalizes how you assess AI risks, evaluate impacts on fundamental rights, test for bias and fairness, implement explainability, govern training data, and monitor models continuously in production. It’s essentially the operationalization of AI ethics, designed to slot neatly into an ISO 27001 environment.

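On the regulatory side, the EU AI Act turns many of these ideas into legal requirements. Its first obligations, including the bans on prohibited AI practices, began applying in February 2025. Certain AI practices are outright banned, while high-risk systems must meet strict obligations. The penalties are steep: fines for prohibited practices can reach €35 million or 7% of global annual turnover, whichever is higher. If you’re building or deploying AI in the EU, this is not optional.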

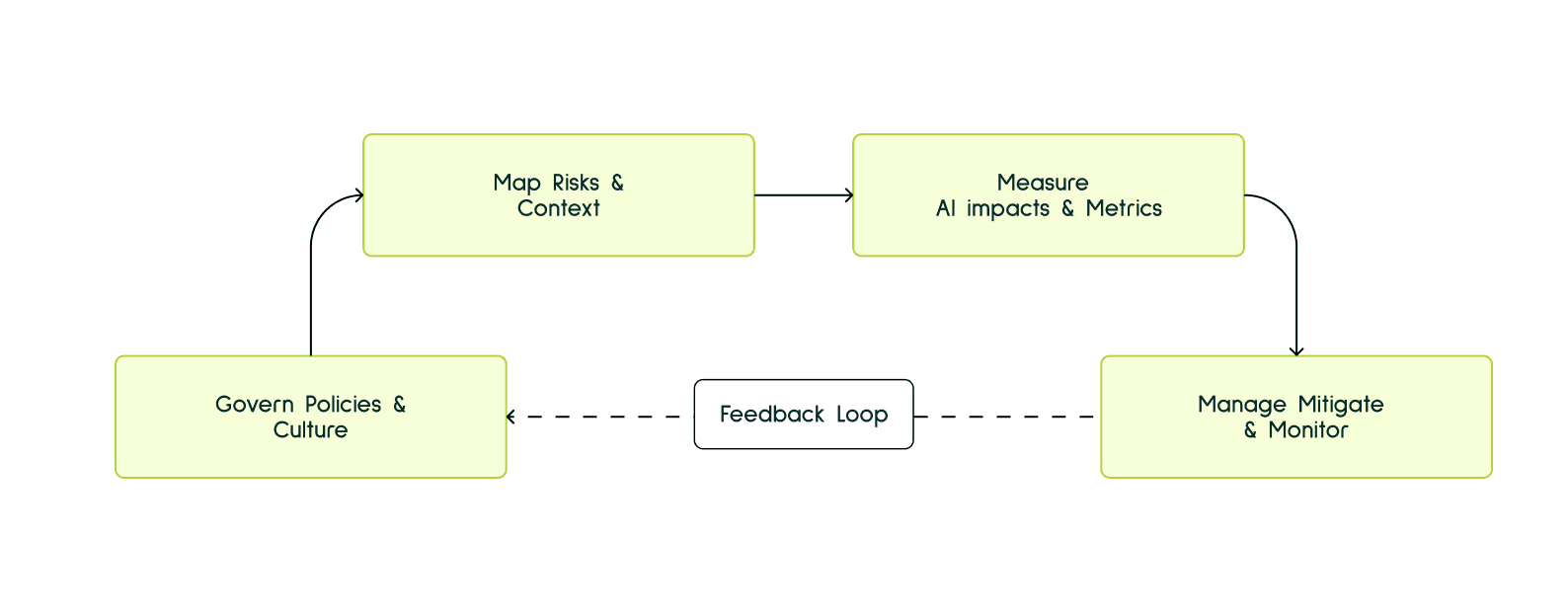

Finally, there’s the NIST AI Risk Management Framework, which is voluntary but extremely practical. It doesn’t prescribe controls; instead, it gives you a way to think and act, organized around four core functions: Govern, Map, Measure, and Manage. In practice, NIST AI RMF pairs well with ISO 42001: NIST shapes how you think about AI risk, while ISO helps you systematize it.

| Framework / Regulation | What It Primarily Does | Why It Matters for AI |
|---|---|---|
| SOC 2 Type II | Proves your operational controls work consistently over time | Establishes trust through evidence of secure access, monitoring, and incident response |
| GDPR | Defines what’s legally allowed when processing personal data | Sets strict rules for AI training, automated decisions, transparency, and individual rights |
| ISO 27001 | Secures the organization via an information security management system | Provides the security foundation that AI systems rely on |
| ISO 42001 | Governs AI behavior and lifecycle management | Addresses AI-specific risks like bias, fairness, explainability, and model monitoring |
| EU AI Act | Enforces binding legal requirements for AI systems | Introduces mandatory controls and heavy penalties for non-compliance |
| NIST AI RMF | Offers a pragmatic, voluntary risk management framework | Helps teams identify, measure, and manage AI risks in practice |

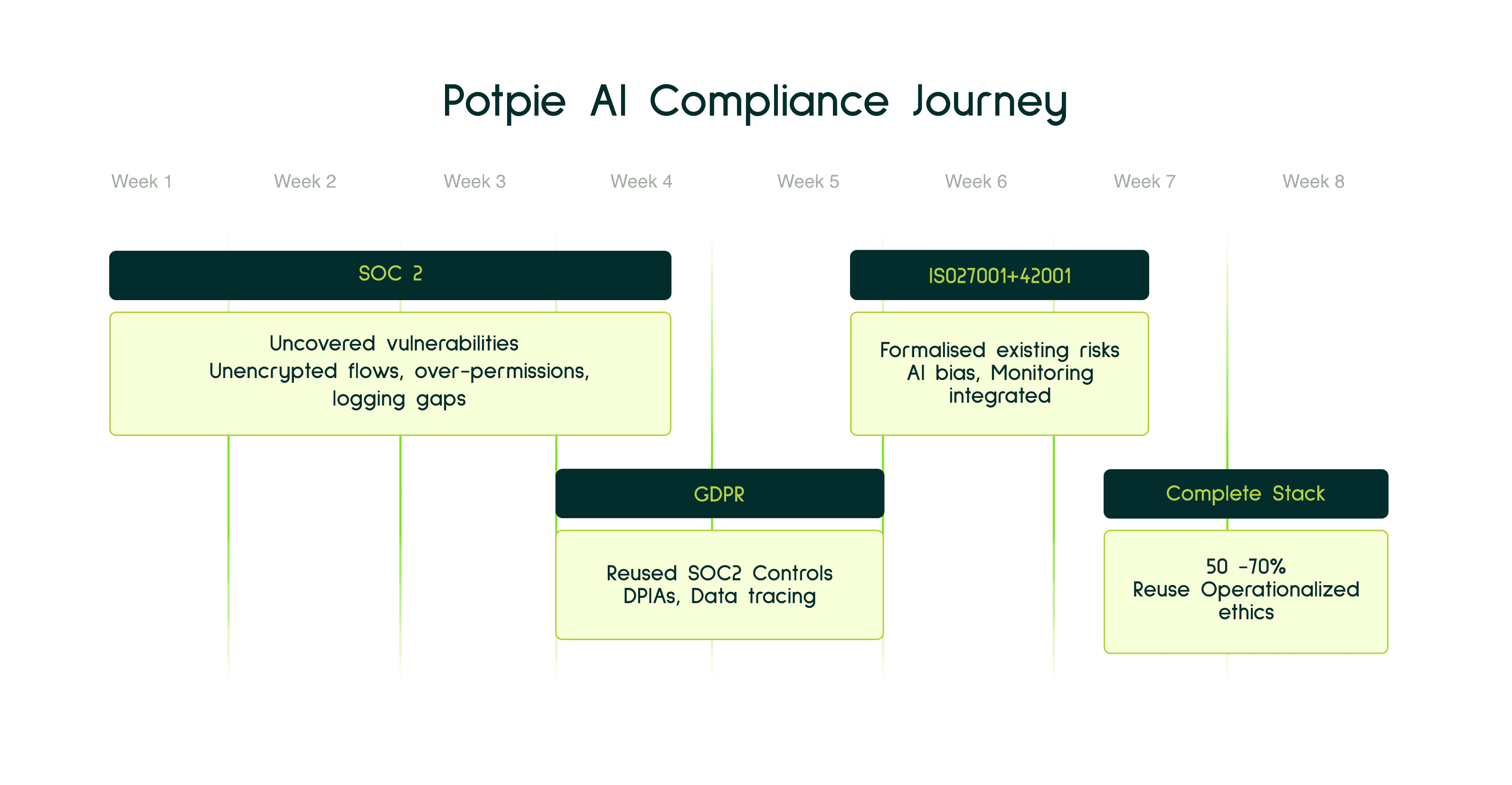

Case Study: Potpie AI’s Compliance Journey

Our experience at Potpie AI shows that compliance frameworks don’t have to be built in isolation or over years. When approached sequentially and intentionally, they reinforce each other.

The full journey (SOC 2, GDPR, ISO 27001, and ISO 42001) took six to eight weeks end to end. But the real value wasn’t how fast it happened. It was what surfaced along the way.

Month 1: SOC 2 Type II (4 weeks)

Starting with SOC 2 forced a deep, uncomfortable look at the company’s operational reality. A rigorous examination of the infrastructure at the operational level, including integrations such as GCP and GitHub, showed where the real vulnerabilities were.

That effort uncovered real issues hiding in plain sight. A few of them:

Unencrypted data flows in specific integration paths

Service accounts with far broader permissions than necessary

Logging blind spots that no automated scanner had ever flagged

SOC 2 made the system more secure from the ground up by exposing where security was missing. The resulting fixes were rolled out to production gradually, addressing each identified weakness before it could turn into a breach.
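As an illustration of how a finding like the over-permissioned service accounts could be checked mechanically, here is a minimal sketch. It assumes an IAM policy exported as a dict in the GCP-style `bindings` shape; the function name, the choice of which roles count as "too broad", and the example accounts are all hypothetical and not Potpie's actual tooling.

```python
from typing import Dict, List

# Assumption of this sketch: basic project-wide roles are considered
# too broad for any service account.
BROAD_ROLES = {"roles/owner", "roles/editor"}


def find_overprivileged_service_accounts(policy: Dict) -> Dict[str, List[str]]:
    """Return {service_account: [broad roles]} for a GCP-style IAM policy dict."""
    findings: Dict[str, List[str]] = {}
    for binding in policy.get("bindings", []):
        role = binding.get("role", "")
        if role not in BROAD_ROLES:
            continue
        for member in binding.get("members", []):
            # Only service accounts are in scope; users and groups are skipped.
            if member.startswith("serviceAccount:"):
                account = member.split(":", 1)[1]
                findings.setdefault(account, []).append(role)
    return findings


# Hypothetical policy, shaped like an exported IAM policy document.
example_policy = {
    "bindings": [
        {
            "role": "roles/editor",
            "members": [
                "serviceAccount:ci-bot@example.iam.gserviceaccount.com",
                "user:dev@example.com",
            ],
        },
        {
            "role": "roles/storage.objectViewer",
            "members": ["serviceAccount:reader@example.iam.gserviceaccount.com"],
        },
    ]
}

if __name__ == "__main__":
    findings = find_overprivileged_service_accounts(example_policy)
    for account, roles in findings.items():
        print(f"{account}: {', '.join(roles)}")
```

Running a check like this on a schedule, and keeping its output, is one way the same control can double as audit evidence.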

GDPR (2 weeks)

With SOC 2 controls already in place, GDPR implementation took just 15 days. The reason: core GDPR expectations such as encryption, access controls, audit logs, and incident response were already operating in production.

Since most of the policy writing had already been done for SOC 2, the focus shifted to tracing where personal data moved through the system, confirming lawful basis and consent, and documenting those decisions through DPIAs. SOC 2’s continuous evidence collection made this process straightforward.
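The tracing step above can be pictured as a small data-flow register that is reviewed for gaps. The sketch below is an assumption about how such a register might look, not a description of our internal system: the `DataFlow` fields, the `review_gaps` helper, and the example flows are hypothetical. The six lawful bases are the ones listed in GDPR Article 6(1).

```python
from dataclasses import dataclass
from typing import List

# The six lawful bases for processing under GDPR Article 6(1).
LAWFUL_BASES = {
    "consent",
    "contract",
    "legal_obligation",
    "vital_interests",
    "public_task",
    "legitimate_interests",
}


@dataclass
class DataFlow:
    """One path along which data moves through the system (hypothetical schema)."""
    name: str
    personal_data: bool
    lawful_basis: str = ""
    high_risk: bool = False
    dpia_reference: str = ""


def review_gaps(flows: List[DataFlow]) -> List[str]:
    """List flows that carry personal data but lack a lawful basis or a DPIA."""
    gaps = []
    for flow in flows:
        if not flow.personal_data:
            continue  # flows without personal data are out of scope here
        if flow.lawful_basis not in LAWFUL_BASES:
            gaps.append(f"{flow.name}: missing or unrecognized lawful basis")
        if flow.high_risk and not flow.dpia_reference:
            gaps.append(f"{flow.name}: high-risk processing without a DPIA on record")
    return gaps


# Hypothetical register entries for illustration only.
example_flows = [
    DataFlow("signup-form -> user-db", personal_data=True, lawful_basis="contract"),
    DataFlow("chat-logs -> fine-tuning", personal_data=True, high_risk=True),
    DataFlow("build-metrics -> dashboard", personal_data=False),
]

if __name__ == "__main__":
    for gap in review_gaps(example_flows):
        print(gap)
```

Keeping the register as structured data rather than a document means the gap review can run alongside the evidence collection already set up for SOC 2.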

ISO 27001 and ISO 42001 (1 week)

Both ISO certifications were completed in one week, largely because they share the same management system structure.

By this point, the controls already existed. ISO work became a matter of formalizing what was already happening: risk registers, control ownership, reviews, and continuous improvement.

ISO 42001 extended that thinking directly into AI: model risk, bias considerations, explainability, and ongoing monitoring, all without requiring a separate compliance universe.

Key Learning

If SOC 2 is implemented properly, 50–70% of the architecture needed for GDPR, ISO 27001, and ISO 42001 is already in place.

These frameworks overlap because they’re solving the same core problems:

Protect sensitive data

Control access

Detect and respond to incidents

Prove, continuously, that safeguards are working

A critical part of completing the compliance stack is doing SOC 2 properly. When SOC 2 is implemented well, it naturally puts the rest of the frameworks on a smooth path. At that point, most of the heavy lifting is already done: you're largely just identifying the remaining policy gaps and filling them in, rather than rebuilding controls from scratch.
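One way to make "identifying the remaining policy gaps" concrete is to keep a control-to-framework mapping and query it. The sketch below is a toy: the control names and which frameworks they are mapped to are invented for illustration, so the ratios it produces are not the percentages quoted in this post and should not be read as an authoritative crosswalk.

```python
from typing import Dict, List, Set

# Hypothetical mapping from internal controls to the frameworks they support.
CONTROL_MAP: Dict[str, Set[str]] = {
    "encryption_in_transit": {"SOC 2", "GDPR", "ISO 27001"},
    "access_reviews": {"SOC 2", "GDPR", "ISO 27001", "ISO 42001"},
    "audit_logging": {"SOC 2", "GDPR", "ISO 27001", "ISO 42001"},
    "incident_response": {"SOC 2", "GDPR", "ISO 27001", "ISO 42001"},
    "dpia_process": {"GDPR", "ISO 42001"},
    "bias_testing": {"ISO 42001"},
    "model_monitoring": {"ISO 42001"},
}


def reuse_ratio(done: str, target: str, control_map: Dict[str, Set[str]]) -> float:
    """Fraction of the target framework's controls already covered by `done`."""
    target_controls = [c for c, fws in control_map.items() if target in fws]
    if not target_controls:
        return 0.0
    reused = [c for c in target_controls if done in control_map[c]]
    return len(reused) / len(target_controls)


def remaining_gaps(done: str, target: str, control_map: Dict[str, Set[str]]) -> List[str]:
    """Controls the target framework needs that `done` does not provide."""
    return sorted(
        c for c, fws in control_map.items() if target in fws and done not in fws
    )


if __name__ == "__main__":
    for target in ("GDPR", "ISO 27001", "ISO 42001"):
        ratio = reuse_ratio("SOC 2", target, CONTROL_MAP)
        gaps = remaining_gaps("SOC 2", target, CONTROL_MAP)
        print(f"{target}: {ratio:.0%} reused, gaps: {gaps}")
```

The useful output is the gap list, since it is exactly the set of controls that still has to be built after SOC 2 is in place.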

The Strategic Shift: Compliance as Architecture

The companies that will lead in the AI era won’t be the ones that see compliance as regulatory drag. They’ll be the ones that treat it as architecture, something designed directly into how products are built, data is handled, models are evaluated, and systems are deployed.

Implementing ISO 42001 alongside SOC 2 and GDPR isn’t about adding layers of bureaucracy. It’s a commitment to knowing what data your models are trained on, testing for bias before systems affect real people, and continuously monitoring behavior once models are in production. It also means responding quickly when things go wrong and owning the outcome.

That level of rigor does more than satisfy auditors. It protects users. It protects the business. And it creates real differentiation in a market crowded with AI products that move fast but can’t always be trusted.
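Testing for bias before deployment can start with something as simple as a release gate on one fairness metric. The sketch below uses demographic parity difference (the gap between the highest and lowest favourable-outcome rates across groups). The metric choice, the 0.1 threshold, and the function names are assumptions for illustration; real evaluations typically need several metrics and domain review.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Record = Tuple[str, int]  # (group label, prediction: 1 = favourable, 0 = not)


def selection_rates(records: List[Record]) -> Dict[str, float]:
    """Share of favourable predictions per group."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, prediction in records:
        totals[group] += 1
        positives[group] += prediction
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(records: List[Record]) -> float:
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(records)
    if not rates:
        return 0.0  # nothing to compare
    return max(rates.values()) - min(rates.values())


def passes_bias_gate(records: List[Record], max_gap: float = 0.1) -> bool:
    """Release gate: True when the parity gap is within the assumed threshold."""
    return demographic_parity_gap(records) <= max_gap


# Hypothetical evaluation results for two groups.
example_records: List[Record] = [
    ("group_a", 1), ("group_a", 1), ("group_a", 1), ("group_a", 0),
    ("group_b", 1), ("group_b", 0), ("group_b", 0), ("group_b", 0),
]

if __name__ == "__main__":
    print(selection_rates(example_records))
    print("gate passed:", passes_bias_gate(example_records))
```

Wiring a gate like this into the evaluation pipeline turns bias testing into a recorded, repeatable control rather than a one-off review.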

Key Takeaways

Compliance is security architecture, not overhead

When built correctly, it prevents vulnerabilities instead of reacting to them. Treat it as part of product design, not an afterthought.

Your SOC 2 and GDPR work is reusable capital

Together, they cover roughly 40–60% of what ISO 42001 requires. Use them as a foundation, not sunk cost.

Frameworks layer; they don’t duplicate

SOC 2 secures operations, GDPR governs data use, ISO 42001 governs AI behavior, and the EU AI Act defines the legal boundaries. Integration is the advantage.

Data lineage and transparency are non-negotiable

You must know where data comes from, how it’s used, what models are trained on it, and who can access it.

Bias testing is mandatory, not optional

Regulators expect it. Customers demand it. Build it into model evaluation before deployment, not after incidents.

Third-party AI is still your responsibility

Using vendor models doesn’t transfer accountability. You own the risk and must audit and govern accordingly.

The idea is to bake compliance into the architecture, not sprinkle it on afterward. That way, it acts like a cozy security blanket over your already “manually” tested code, quietly reassuring everyone that things won’t catch fire in production.
