POTPIE'S METRICS

Benchmarking AI Coding Tools in Production Environments

Tested against real-world queries across five large open-source codebases, Potpie sets a new benchmark in AI-driven code analysis.

Overview

We evaluate multiple AI code understanding agents across a set of curated questions drawn from real-world repositories to highlight practical differences in how they retrieve context, reason across files, and explain code behavior. The goal is to surface how well each agent handles tasks that resemble everyday developer queries.

Each agent is evaluated using default configurations with no additional tuning or repository-specific customization. We measure answer accuracy, reasoning quality, context retrieval, and consistency across queries to reflect how these systems perform in realistic development workflows.

Methodology

For the QA benchmark, we selected repositories that represent real-world software complexity and workflows. The chosen repos are popular open-source projects with active development histories, meaningful dependency structures, and diverse code patterns, so agents must reason across files, modules, and context.


How We Evaluate Responses

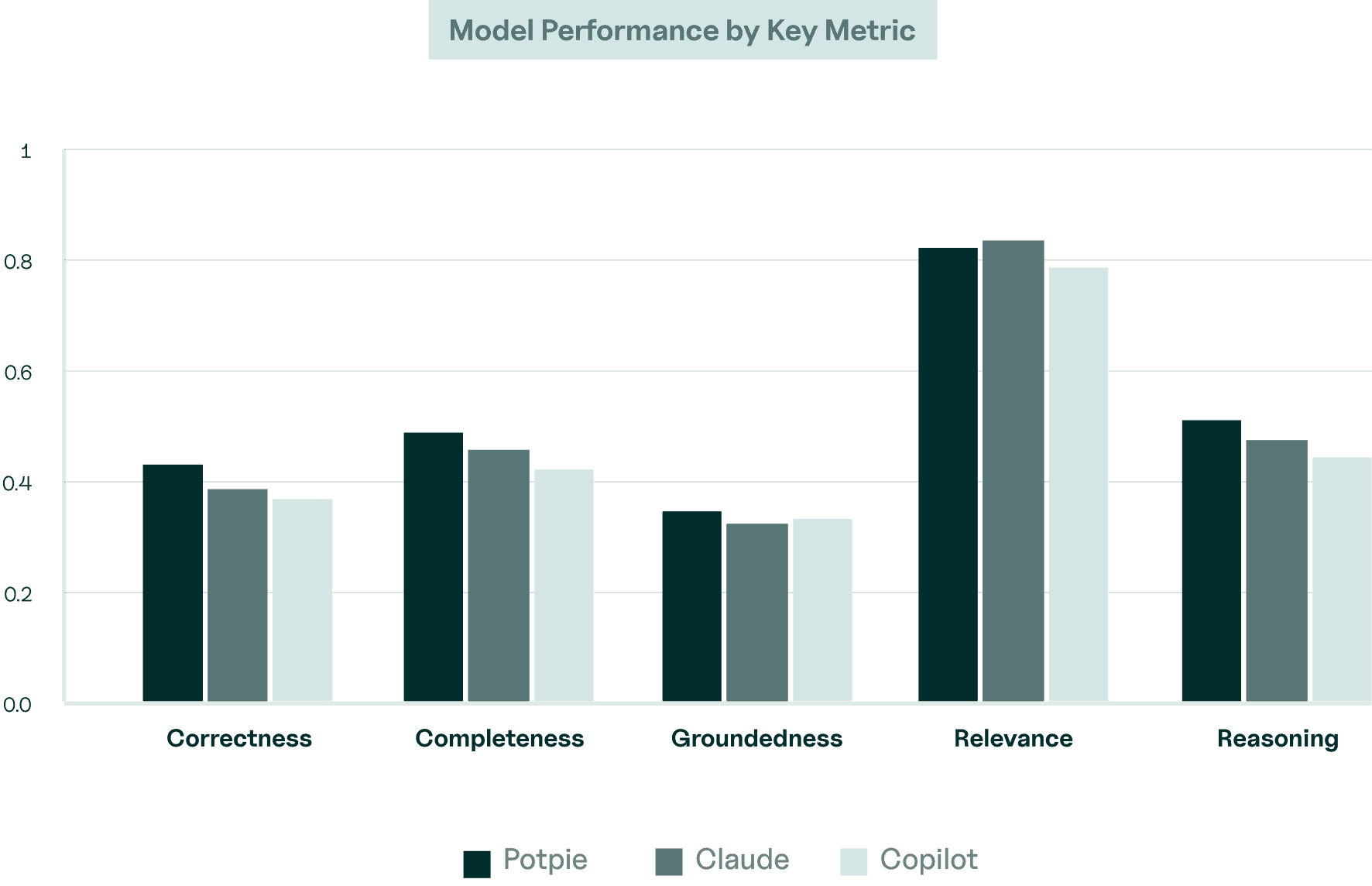

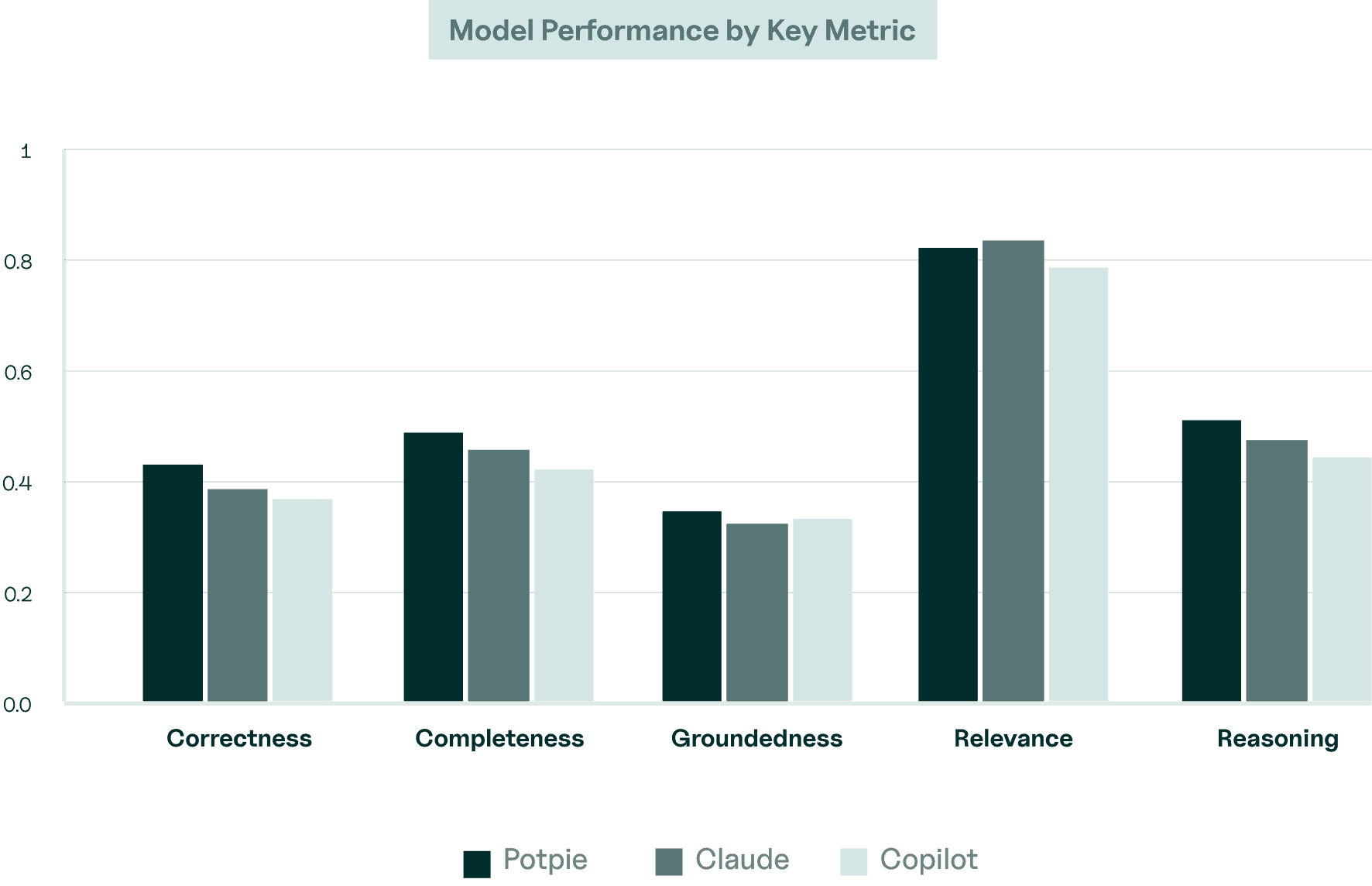

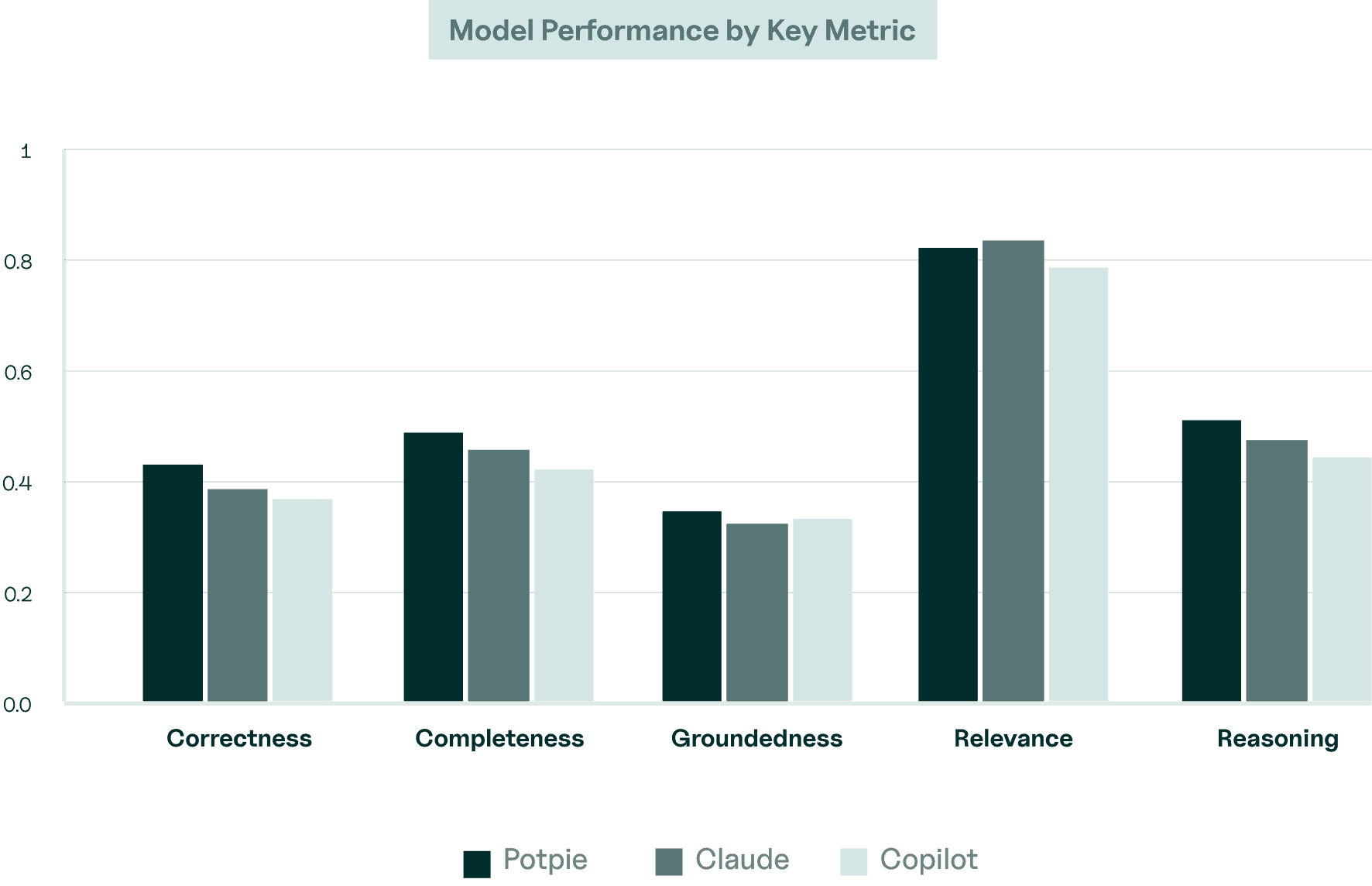

Our evaluation pipeline uses the G-Eval framework to assess five core parameters. These standardized metrics allow us to continuously benchmark and refine our models against complex, real-world development tasks.

Correctness: Accuracy of factual information and code references

Completeness: Coverage of relevant aspects, edge cases, and alternative approaches

Groundedness: Degree to which answers reference actual code

Relevance: How well responses directly address the specific question asked

Reasoning: Clear logic, deep explanations, and the ability to connect multiple concepts
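To make the five criteria concrete, here is a minimal sketch of how per-criterion scores could be combined into a single number for one answer. This page does not say how the criteria are weighted or aggregated, so the equal-weight mean, the 0 to 1 score range, and the names `CRITERIA` and `overall_score` are assumptions for illustration, not Potpie's actual pipeline.

```python
from statistics import mean
from typing import Mapping

# The five criteria and their descriptions as listed on this page.
CRITERIA = {
    "correctness": "Accuracy of factual information and code references",
    "completeness": "Coverage of relevant aspects, edge cases, and alternative approaches",
    "groundedness": "Degree to which answers reference actual code",
    "relevance": "How well responses directly address the specific question asked",
    "reasoning": "Clear logic, deep explanations, and the ability to connect multiple concepts",
}


def overall_score(scores: Mapping[str, float]) -> float:
    """Combine per-criterion scores with an equal-weight mean (assumed aggregation)."""
    missing = CRITERIA.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    for name in CRITERIA:
        # Assumption: each judge score is normalized to the range [0, 1].
        if not 0.0 <= scores[name] <= 1.0:
            raise ValueError(f"{name} score out of range: {scores[name]}")
    return mean(scores[name] for name in CRITERIA)


# Illustrative values only, not benchmark results.
example = {
    "correctness": 0.8,
    "completeness": 0.6,
    "groundedness": 1.0,
    "relevance": 0.4,
    "reasoning": 0.7,
}
combined = overall_score(example)
```

A weighted mean would be a natural variation if some criteria matter more than others.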

REPOSITORY SELECTION

Five diverse, production-grade open-source repositories

The evaluation process begins with query generation, where targeted questions are created for each repository to examine architectural interactions, state management mechanisms, failure recovery strategies, and specific implementation details such as functions, patterns, and code structure. After generating the queries, reference answers are established by cross-checking multiple sources and manually validating the information to ensure reliable ground truth.

apache/airflow

Distributed workflow orchestration system with complex state management

Large-scale, well-documented editor platform

Backend-as-a-service platform with TypeScript/React patterns
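The query generation and reference answer steps described above can be pictured as a small record per question, with a gate that admits an item to the ground truth set only after cross-checking and manual validation. This is a hedged sketch: the `BenchmarkItem` class, its field names, and the "at least two distinct sources" threshold are hypothetical and are not taken from Potpie's tooling.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BenchmarkItem:
    repo: str                # repository the question targets, e.g. "apache/airflow"
    question: str            # the generated query
    focus: str               # hypothetical label, e.g. "state management"
    reference_answer: str    # candidate ground-truth answer
    sources: List[str] = field(default_factory=list)  # where the answer was cross-checked
    manually_validated: bool = False                   # set after a human review

    def is_ground_truth(self) -> bool:
        """Accept only answers checked against 2+ distinct sources and reviewed by hand."""
        return len(set(self.sources)) >= 2 and self.manually_validated


# Placeholder content for illustration; not an actual benchmark question.
draft = BenchmarkItem(
    repo="apache/airflow",
    question="<generated question>",
    focus="state management",
    reference_answer="<reference answer>",
    sources=["source code"],
)
reviewed = BenchmarkItem(
    repo="apache/airflow",
    question="<generated question>",
    focus="state management",
    reference_answer="<reference answer>",
    sources=["source code", "official documentation"],
    manually_validated=True,
)
ground_truth = [item for item in (draft, reviewed) if item.is_ground_truth()]
```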

Analysis

We further analyze results by categorizing questions into architecture guarantees, data flow, and error handling. Although QA evaluations can rely on a range of metrics, our analysis prioritizes correctness and completeness. In our benchmarks, Claude and Potpie produced comparable results on correctness, but Potpie scored slightly higher on completeness by consistently covering more relevant details and context in its answers. We used Opus 4.6 for all agents in this analysis.
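A per-category breakdown like the one described above can be sketched as a group-and-average step over scored answers. The record layout, the `summarize` helper, the placeholder agent names, and the sample values are all assumptions made for illustration; they are not benchmark data.

```python
from collections import defaultdict
from statistics import mean


def summarize(results):
    """Average correctness and completeness per (agent, category) pair."""
    buckets = defaultdict(lambda: {"correctness": [], "completeness": []})
    for row in results:
        key = (row["agent"], row["category"])
        buckets[key]["correctness"].append(row["correctness"])
        buckets[key]["completeness"].append(row["completeness"])
    return {
        key: {metric: mean(values) for metric, values in metrics.items()}
        for key, metrics in buckets.items()
    }


# Made-up rows using the three question categories named above.
results = [
    {"agent": "agent_a", "category": "data flow", "correctness": 0.8, "completeness": 0.6},
    {"agent": "agent_a", "category": "data flow", "correctness": 0.6, "completeness": 0.8},
    {"agent": "agent_b", "category": "error handling", "correctness": 0.5, "completeness": 0.5},
]
summary = summarize(results)
```

Keeping the two prioritized metrics separate, rather than merging them into one score, makes it possible to see cases where agents tie on correctness but differ on completeness.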

Examples of Potpie Excellence

Question 1

Tinygrad Memory Consistency

How does tinygrad ensure memory consistency and correct gradient aggregation across devices in distributed training?

Potpie: 0.935 | Claude: 0.625 | Copilot: 0.475

Why Potpie does better:

Codebase-Aware Reasoning: Potpie analyzes the actual repository structure, allowing it to reason from real code instead of general knowledge.

Higher Groundedness in Code: Its answers are grounded directly in the repo’s code paths, improving correctness.

Multi-File Context Understanding: Potpie can trace interactions across multiple modules to understand system behavior.

Question 2

Mattermost WebSocket Consistency

How does the WebSocket system ensure eventual consistency during network partitions?

Potpie: 0.760 | Claude: 0.130 | Copilot: 0.615

Why Potpie does better:

Deep system architecture: Explained multi-layer consistency (memory, database, network)

Specific recovery patterns: Detailed message buffering, replay queues, and convergence logic

Concrete code references: Cited actual WebSocket handlers and state machine transitions

Edge case handling: Covered partial failures, split-brain scenarios, and reconciliation strategies

Ready to experience engineering without the grind?

Start using Potpie and let agents handle the complexity while your team focuses on impact.

© 2026 Potpie. All rights reserved.

[CODEBASE Q&A AGENT]


