Introduction

Understanding a large codebase requires more than just-in-time search. When an agent needs to answer "what happens when a user clicks checkout?", it needs to trace function calls, understand how data flows, and connect components across hundreds of files. The approaches used by mainstream tools, such as standalone grep or embedding-based retrieval, work for small codebases but fall apart once repositories grow into millions of lines.

We built a system that constructs a queryable knowledge graph from source code, enabling semantic search, call graph traversal, and impact analysis. Tree-sitter parsing and Neo4j storage are fairly well-understood problems, so the main challenge wasn't constructing the graph itself. It was doing so reliably for repositories with millions of lines of code, where sequential processing takes days and any failure means starting over.

This writeup explains the structure of our knowledge graph, how agents use it to understand code, and the distributed architecture that makes it possible to build these graphs at scale.

The Knowledge Graph Structure

The knowledge graph represents code as nodes and relationships in Neo4j. Every source file becomes a FILE node containing the raw text. Classes, interfaces, and functions extracted via tree-sitter queries become their own nodes, linked to their containing file through CONTAINS edges.

Each node carries metadata: file path, line numbers, source text, and a unique identifier. After initial parsing, an inference pipeline enriches nodes with LLM-generated docstrings that describe what the code does in natural language, and vector embeddings that enable semantic similarity search.

Relationships capture how code elements interact. CALLS edges connect functions to the functions they invoke. INHERITS edges link classes to their parents. IMPLEMENTS edges connect classes to interfaces. These relationships make it possible to traverse the codebase structurally, for example following a function call chain from an API endpoint down to a database query.
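To make the node and edge types concrete, here is a minimal in-memory sketch of the model, using only the Python standard library as a stand-in for Neo4j. The Node fields mirror the metadata described in this section; the names Node, CodeGraph, and call_chain, and the string node IDs, are hypothetical, and the breadth-first search only approximates what a Cypher path query would do against the real graph.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Node:
    node_id: str
    repo_id: str
    kind: str        # "FILE", "CLASS", "INTERFACE", or "FUNCTION"
    name: str
    file_path: str
    start_line: int
    end_line: int


class CodeGraph:
    """Hypothetical in-memory stand-in for the Neo4j graph: nodes plus typed, directed edges."""

    def __init__(self) -> None:
        self.nodes: dict[str, Node] = {}
        # source node_id -> list of (relationship type, target node_id)
        self.out_edges: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def add_node(self, node: Node) -> None:
        self.nodes[node.node_id] = node

    def add_edge(self, src: str, rel_type: str, dst: str) -> None:
        # rel_type is one of CONTAINS, CALLS, INHERITS, IMPLEMENTS
        self.out_edges[src].append((rel_type, dst))

    def call_chain(self, start: str, target: str) -> Optional[list[str]]:
        """Shortest path from start to target following only CALLS edges (breadth-first search)."""
        parents: dict[str, Optional[str]] = {start: None}
        queue = deque([start])
        while queue:
            current = queue.popleft()
            if current == target:
                path = []
                node: Optional[str] = current
                while node is not None:
                    path.append(node)
                    node = parents[node]
                return path[::-1]
            for rel_type, dst in self.out_edges.get(current, []):
                if rel_type == "CALLS" and dst not in parents:
                    parents[dst] = current
                    queue.append(dst)
        return None
```

For example, if an endpoint checkout calls charge_card, which in turn calls save_order, then call_chain("checkout", "save_order") returns the three IDs in call order, while a CONTAINS edge from the file to checkout is ignored by the traversal.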

The schema defines composite indexes on (repo_id, node_id) and on (name, repo_id), allowing efficient queries scoped to a specific repository. A vector index on the docstring embeddings powers semantic search, using 384-dimensional vectors with cosine similarity.
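As a rough sketch, the indexes described above could be declared with Cypher DDL like the following, generated here from Python. The shared node label NODE, the index names, and the embedding property name are assumptions; the statements follow the CREATE INDEX and CREATE VECTOR INDEX syntax of recent Neo4j 5 releases, and each string could be executed with session.run from the official Neo4j Python driver.

```python
VECTOR_DIMENSIONS = 384  # from the text: 384-dimensional docstring embeddings


def schema_statements(label: str = "NODE", dims: int = VECTOR_DIMENSIONS) -> list[str]:
    """Cypher DDL for the indexes described above; label, index names, and property names are assumptions."""
    return [
        # Composite index for repo-scoped lookups by node ID
        f"CREATE INDEX repo_node_idx IF NOT EXISTS FOR (n:{label}) ON (n.repo_id, n.node_id)",
        # Composite index for repo-scoped lookups by name
        f"CREATE INDEX name_repo_idx IF NOT EXISTS FOR (n:{label}) ON (n.name, n.repo_id)",
        # Vector index over docstring embeddings using cosine similarity
        (
            f"CREATE VECTOR INDEX docstring_embedding_idx IF NOT EXISTS "
            f"FOR (n:{label}) ON n.embedding "
            f"OPTIONS {{indexConfig: {{`vector.dimensions`: {dims}, "
            f"`vector.similarity_function`: 'cosine'}}}}"
        ),
    ]


for statement in schema_statements():
    print(statement)
```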

We chose 384 dimensions based on research showing that retrieval accuracy gains beyond 384 dimensions are not worth the additional storage cost.
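The storage side of that tradeoff is easy to estimate, because raw vector storage grows linearly with dimension. The sketch below assumes 4-byte float32 components and a hypothetical repository with 10 million embedded nodes; actual on-disk size in Neo4j will differ because of property encoding and index overhead, so the numbers are best read as relative rather than absolute.

```python
BYTES_PER_COMPONENT = 4  # assumption: float32 components


def raw_embedding_storage_gb(num_nodes: int, dims: int) -> float:
    """Raw vector payload only, in decimal gigabytes; excludes index structures and metadata."""
    return num_nodes * dims * BYTES_PER_COMPONENT / 1e9


NUM_NODES = 10_000_000  # hypothetical number of embedded nodes

for dims in (384, 768, 1536):
    print(f"{dims:>4} dims: {raw_embedding_storage_gb(NUM_NODES, dims):.2f} GB")
```

Under these assumptions, 384 dimensions need about 15.4 GB of raw vectors against about 61.4 GB for 1,536 dimensions, and that 4x ratio holds at any node count.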

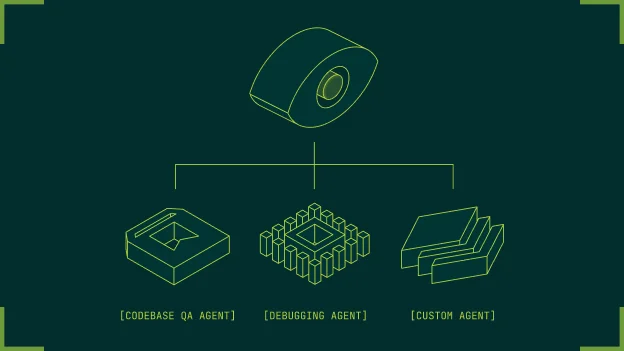

How Agents Use the Knowledge Graph

The knowledge graph backs a suite of tools that agents invoke to understand codebases.

Semantic search converts natural language questions into embeddings and queries the vector index. When a user asks "where do we validate user permissions?", the system finds nodes whose docstrings are semantically similar to the query. Results return with similarity scores, file paths, and the actual code. This also helps in large, modular codebases. Consider an interface with an 'add' method and 50 classes implementing it. A grep for "add" could return 100 matches that need additional filtering and eat up context, whereas the knowledge graph approach points directly to the correct add function based on semantic context. It also returns only that bounded function rather than the entire file.
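The ranking step can be sketched as a brute-force cosine similarity scan over docstring embeddings. This is a stand-in for the real vector index, which in Neo4j would typically be queried through the db.index.vector.queryNodes procedure; the IndexedNode type and the three-dimensional toy vectors are illustrative only (the real embeddings have 384 dimensions). Each result carries only the node's own source span, which is what keeps results bounded to a single function.

```python
import math
from dataclasses import dataclass


@dataclass
class IndexedNode:
    node_id: str
    file_path: str
    code: str                # only this node's source span, not the whole file
    embedding: list[float]   # docstring embedding


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def semantic_search(query_embedding: list[float], nodes: list[IndexedNode], k: int = 5):
    """Return the top-k nodes as (score, node_id, file_path, code), highest similarity first."""
    scored = [(cosine_similarity(query_embedding, n.embedding), n) for n in nodes]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(score, n.node_id, n.file_path, n.code) for score, n in scored[:k]]
```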

Call graph traversal follows relationships to answer structural questions. The get_node_neighbours tool retrieves direct neighbors, i.e., everything a function calls or is called by. The get_code_graph tool builds hierarchical subgraphs up to 10 levels deep, showing how a component connects to the rest of the system; a shallower limit may be worth considering to preserve the agent's context.
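A minimal sketch of the two traversal tools over an in-memory CALLS adjacency follows. Only the tool names come from the text; the CallIndex class, the return shapes, and the nested-dictionary tree format are assumptions, and the real tools query Neo4j rather than Python dictionaries. The max_depth parameter corresponds to the 10-level limit, and the visited set keeps cycles such as mutual recursion from expanding forever.

```python
from collections import defaultdict


class CallIndex:
    """Hypothetical in-memory CALLS index supporting the two traversal tools."""

    def __init__(self) -> None:
        self.callees: dict[str, set[str]] = defaultdict(set)
        self.callers: dict[str, set[str]] = defaultdict(set)

    def add_call(self, caller: str, callee: str) -> None:
        self.callees[caller].add(callee)
        self.callers[callee].add(caller)

    def get_node_neighbours(self, node_id: str) -> dict[str, list[str]]:
        """Direct neighbours only: what this node calls and what calls it."""
        return {
            "calls": sorted(self.callees.get(node_id, set())),
            "called_by": sorted(self.callers.get(node_id, set())),
        }

    def get_code_graph(self, root: str, max_depth: int = 10) -> dict:
        """Nested {node: {callee: {...}}} tree following CALLS edges, at most max_depth levels below root."""
        visited = {root}

        def expand(node: str, depth: int) -> dict:
            if depth >= max_depth:
                return {}
            children = {}
            for callee in sorted(self.callees.get(node, set())):
                if callee not in visited:  # each node appears once, which also breaks cycles
                    visited.add(callee)
                    children[callee] = expand(callee, depth + 1)
            return children

        return {root: expand(root, 0)}
```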

Tag-based filtering lets agents find code by role. Nodes can be tagged as API, DATABASE, AUTH, UI_COMPONENT, PRODUCER, CONSUMER, and other categories; tags are assigned during the inference pipeline. An agent investigating a bug in checkout can retrieve all DATABASE-tagged nodes to understand data access patterns.

Change impact analysis combines git diffs with graph traversal. The system identifies which functions changed between branches, then traces up the call chains to find entry points, such as API endpoints or event handlers, that ultimately invoke the changed code. This can power automated PR reviews that explain the blast radius of a change.
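The two steps can be sketched as follows: map changed line numbers (for example, collected from the hunk headers of `git diff -U0`) onto function spans, then walk CALLS edges in reverse until reaching entry points. The function names, the span and caller dictionaries, and the explicit entry_points set are assumptions for illustration; in the real system both the spans and the callers would come from the graph, and entry points might be identified by tags such as API.

```python
from collections import deque


def changed_functions(spans: dict[str, tuple[str, int, int]],
                      changed_lines: dict[str, set[int]]) -> set[str]:
    """Functions whose (file, start_line, end_line) span contains at least one changed line."""
    hits = set()
    for fn, (path, start, end) in spans.items():
        if any(start <= line <= end for line in changed_lines.get(path, ())):
            hits.add(fn)
    return hits


def impacted_entry_points(changed: set[str], callers: dict[str, set[str]],
                          entry_points: set[str]) -> set[str]:
    """Breadth-first walk up the call graph from changed functions, collecting entry points reached."""
    seen = set(changed)
    queue = deque(changed)
    reached = set()
    while queue:
        fn = queue.popleft()
        if fn in entry_points:
            reached.add(fn)
        for caller in callers.get(fn, ()):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)
    return reached
```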

A typical agent workflow: receive a question, query the vector index for relevant nodes, retrieve their code, find connected nodes through relationship traversal, run scoped grep commands, and synthesize an answer grounded in actual source code. The knowledge graph transforms vague questions into precise code locations.

The Challenge

Building this graph for a 35-million-line repository exposed fundamental limitations in our original architecture.

The sequential approach (clone the repo, traverse directories, parse each file, build an in-memory graph, and write it to Neo4j) never completed. Memory ran out before parsing finished. When we raised memory limits enough to survive parsing, the Neo4j write phase would time out. When we batched the writes, the overall process took so long that infrastructure failures (worker restarts, network blips) killed the job before it finished.

We needed distributed parsing: split the repository into chunks, parse them in parallel across multiple workers, and merge the results. But distributed systems introduce coordination problems that don't exist in single-threaded code.

Why Celery Chords Failed

The first distributed implementation used Celery's chord primitive: dispatch thousands of parsing tasks in parallel (with a cap on how many run at once), then run a callback that aggregates the results and writes the final graph. Celery handles the coordination; we just define the tasks.

This broke silently. The job simply hung without any error, which made it very difficult to debug. Only after someone suggested inspecting the Redis queue backing the Celery tasks and digging into each task individually was I able to see that the chord's final aggregation step was what was breaking.

Chords break at scale because the callback receives all task results simultaneously. With 20,000 work units (the number required for a 35-million-line repo), Celery loads 20,000 result objects into memory before invoking the callback. Workers with 8GB of RAM crashed immediately. Increasing memory just delayed the inevitable: at some scale, aggregating results in a single callback will always OOM.

We also discovered message size limits. Celery serializes task arguments through the message broker. Passing 2,000 file paths per task, a reasonable work unit size, exceeded the broker's limits. Tasks were silently failing before they even started.

Coordination Without a Coordinator

The chord pattern failed because it needed one place to collect all results. We needed a different approach to let workers coordinate among themselves without any central aggregator.

When a worker finishes parsing, it writes its results directly to Neo4j. It then signals completion by updating two values in Redis: a set of completed work unit IDs and a counter of how many units are done.

The set matters for handling failures such as worker crashes and network blips. Celery automatically retries failed tasks, which means the same work unit might complete twice. By checking whether the work unit ID is already in the set before incrementing the counter, we avoid counting it twice: the first completion adds the ID and bumps the counter, and any duplicate sees that the ID already exists and skips the count.

The counter matters for knowing when to proceed. Each worker checks: did my increment bring the count to the total number of work units? If yes, I'm the last one done, so I trigger the next phase. If no, I exit and let others continue.
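The completion protocol is small enough to sketch in full. To keep the example self-contained, InMemoryRedis is a hypothetical stand-in implementing just the two commands involved: SADD, which returns how many members were newly added, and INCR, which returns the incremented value. A redis-py client exposes methods with the same names and return values. The key names and the start_finalization callback are assumptions. One caveat the sketch does not address: against a real Redis server, a worker that crashes between SADD and INCR would leave the counter one short, so running both steps atomically (for example in a Lua script) is a reasonable hardening.

```python
import threading
from typing import Callable


class InMemoryRedis:
    """Hypothetical stand-in for the two Redis commands used below; each call is atomic, as in Redis."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._sets: dict[str, set[str]] = {}
        self._counters: dict[str, int] = {}

    def sadd(self, key: str, member: str) -> int:
        with self._lock:
            members = self._sets.setdefault(key, set())
            if member in members:
                return 0
            members.add(member)
            return 1

    def incr(self, key: str) -> int:
        with self._lock:
            self._counters[key] = self._counters.get(key, 0) + 1
            return self._counters[key]


def on_work_unit_complete(r, session_id: str, unit_id: str, total_units: int,
                          start_finalization: Callable[[str], None]) -> bool:
    """Called by a worker after its results are in Neo4j. Returns True if this worker triggered finalization."""
    # SADD reports 0 for a unit that was already recorded, so a retried duplicate is not counted twice.
    if r.sadd(f"parse:{session_id}:done_units", unit_id) == 0:
        return False
    # INCR hands every caller a distinct value, so exactly one worker observes the final count.
    if r.incr(f"parse:{session_id}:done_count") == total_units:
        start_finalization(session_id)
        return True
    return False
```

Because the decision is made from INCR's return value rather than by reading the counter separately, two workers finishing at the same moment can never both conclude they were last.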

This creates a system where 20,000 workers can operate completely independently. No coordinator polls for status. No callback waits to receive results. Workers write their data, update two values, and move on. Whoever happens to finish last kicks off finalization.

Work Unit Distribution

How work is divided matters as much as how it's coordinated. One task per file creates millions of queue entries, and the overhead of task serialization, dispatch, and acknowledgment dominates actual parsing time. One task per directory creates unpredictable load: some directories contain 5,000 files, others contain three.

We use a bin-packing algorithm that creates balanced work units of 1,500-2,000 files each. The process collects all parseable files grouped by directory, sorts directories by size (largest first), and applies first-fit-decreasing: each directory either fits into an existing bin with remaining capacity, or starts a new bin. Directories larger than the maximum get split into chunks.
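A minimal sketch of this first-fit-decreasing packing, assuming files arrive already grouped by directory. The function name and the choice to split oversized directories before sorting (rather than after) are assumptions. Plain first-fit-decreasing guarantees the 2,000-file cap but not the 1,500-file floor, so some bins can come out smaller.

```python
def pack_work_units(files_by_dir: dict[str, list[str]], max_files: int = 2000) -> list[list[str]]:
    """First-fit-decreasing packing of per-directory file groups into work units of at most max_files."""
    # Split directories larger than the cap into cap-sized chunks so every group can fit in a bin.
    groups: list[list[str]] = []
    for files in files_by_dir.values():
        ordered = sorted(files)
        for i in range(0, len(ordered), max_files):
            groups.append(ordered[i:i + max_files])

    # Largest groups first, then place each into the first bin with enough remaining capacity.
    groups.sort(key=len, reverse=True)
    bins: list[list[str]] = []
    for group in groups:
        for current in bins:
            if len(current) + len(group) <= max_files:
                current.extend(group)
                break
        else:
            bins.append(list(group))  # no existing bin fits: start a new one
    return bins
```

Keeping each directory's files together where possible also keeps related definitions in the same work unit, which is a side benefit of packing whole directories rather than individual files.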

The result is predictable parallelism. Every work unit takes roughly the same time to process. No worker sits idle while another churns through a massive directory.

Massive file lists don't travel through Celery, because of the same Redis broker limits mentioned above. We store them in PostgreSQL and pass only work unit IDs as task arguments; workers fetch their file lists from the database. This sidesteps message size limits entirely. The coordinator commits the database transaction before dispatching tasks; without this, workers might not see their records because of transaction isolation.
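The payload-by-reference pattern can be sketched with the standard library's sqlite3 module standing in for PostgreSQL. The table layout, the JSON-encoded file list column, and the function names are assumptions. The ordering that matters is that the coordinator's transaction commits before any ID reaches the broker; with Celery, dispatching would then be a call such as parse_task.delay(unit_id) for a hypothetical task named parse_task.

```python
import json
import sqlite3


def init_schema(conn: sqlite3.Connection) -> None:
    conn.execute(
        """CREATE TABLE IF NOT EXISTS work_units (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               session_id TEXT NOT NULL,
               status TEXT NOT NULL DEFAULT 'pending',
               files TEXT NOT NULL  -- JSON array of file paths
           )"""
    )
    conn.commit()


def create_work_units(conn: sqlite3.Connection, session_id: str, units: list[list[str]]) -> list[int]:
    """Coordinator side: persist every file list and commit, then return only the small IDs to dispatch."""
    ids = []
    with conn:  # commits when the block exits without an exception, rolls back otherwise
        for files in units:
            cur = conn.execute(
                "INSERT INTO work_units (session_id, files) VALUES (?, ?)",
                (session_id, json.dumps(files)),
            )
            ids.append(cur.lastrowid)
    # Only after the commit is it safe to enqueue tasks carrying these IDs.
    return ids


def fetch_files(conn: sqlite3.Connection, unit_id: int) -> list[str]:
    """Worker side: load the file list for the work unit named in the task argument."""
    row = conn.execute("SELECT files FROM work_units WHERE id = ?", (unit_id,)).fetchone()
    if row is None:
        raise LookupError(f"work unit {unit_id} not found")
    return json.loads(row[0])
```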

Parallel Parsing Within Workers

Each work unit still contains thousands of files. Sequential parsing within a worker wastes potential parallelism. We run a ThreadPoolExecutor with 15 threads inside each Celery task, parsing files concurrently.

Each thread uses tree-sitter to extract tags from its file and merges the results into a shared NetworkX graph protected by a lock. The lock isn't a bottleneck, as parsing takes only a few milliseconds per file.
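The shape of this step can be sketched with a ThreadPoolExecutor of 15 threads and a lock-protected shared graph. Two substitutions keep the sketch runnable with only the standard library: Python's built-in ast module stands in for tree-sitter (so it only handles Python sources), and a plain dictionary-and-list structure stands in for the NetworkX graph. The node ID format and the SharedGraph name are hypothetical. Parsing happens outside the lock and only the merge is serialized; how much true CPU parallelism the threads achieve depends on whether the parser releases Python's GIL.

```python
import ast
import threading
from concurrent.futures import ThreadPoolExecutor


def extract_definitions(path: str, source: str) -> list[tuple[str, str, int, int]]:
    """Return (kind, name, start_line, end_line) for every class and function in one file."""
    tree = ast.parse(source, filename=path)
    found = []
    for node in ast.walk(tree):
        if isinstance(node, ast.ClassDef):
            found.append(("CLASS", node.name, node.lineno, node.end_lineno))
        elif isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            found.append(("FUNCTION", node.name, node.lineno, node.end_lineno))
    return found


class SharedGraph:
    """Hypothetical stand-in for the shared NetworkX graph; merge() is the only locked section."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self.nodes: dict[str, dict] = {}
        self.edges: list[tuple[str, str, str]] = []  # (source, relationship, target)

    def merge(self, path: str, definitions: list[tuple[str, str, int, int]]) -> None:
        with self._lock:
            self.nodes[path] = {"kind": "FILE"}
            for kind, name, start, end in definitions:
                node_id = f"{path}:{name}:{start}"
                self.nodes[node_id] = {"kind": kind, "name": name, "start_line": start, "end_line": end}
                self.edges.append((path, "CONTAINS", node_id))


def parse_work_unit(sources: dict[str, str], max_workers: int = 15) -> SharedGraph:
    """Parse every file of one work unit concurrently and merge the results into a single graph."""
    graph = SharedGraph()

    def parse_one(path: str) -> None:
        definitions = extract_definitions(path, sources[path])  # runs without holding the lock
        graph.merge(path, definitions)

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        list(pool.map(parse_one, sources))  # list() surfaces any exception raised in a thread
    return graph
```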

The graph is written to Neo4j in batches of 1,000 nodes and edges. Batching avoids overwhelming the database with individual transactions while keeping memory usage bounded.
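Batching can be sketched as a generator that slices any iterable of rows into fixed-size chunks, with each chunk sent as one parameterized UNWIND query. The NODE label, the row shape, and the use of MERGE rather than CREATE are assumptions: MERGE keeps a retried batch from duplicating nodes, though concurrent MERGEs on the same key are only fully safe with a uniqueness constraint. The run_query callable stands in for the actual database call; with the Neo4j Python driver it could wrap a managed write transaction. Edges would be written the same way with a relationship-creating query.

```python
from itertools import islice
from typing import Any, Callable, Iterable, Iterator

# Hypothetical upsert: one round trip writes a whole batch of node rows.
NODE_UPSERT = """
UNWIND $rows AS row
MERGE (n:NODE {repo_id: row.repo_id, node_id: row.node_id})
SET n += row.props
"""


def chunked(rows: Iterable[dict], size: int = 1000) -> Iterator[list[dict]]:
    """Yield consecutive lists of at most `size` rows without materializing the whole input."""
    it = iter(rows)
    while batch := list(islice(it, size)):
        yield batch


def write_in_batches(rows: Iterable[dict],
                     run_query: Callable[[str, dict[str, Any]], None],
                     size: int = 1000) -> int:
    """Send each batch as a single parameterized query; returns the number of rows written."""
    written = 0
    for batch in chunked(rows, size):
        run_query(NODE_UPSERT, {"rows": batch})
        written += len(batch)
    return written
```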

Failure Recovery

Large jobs fail often. The architecture assumes failure and tracks state at three levels of granularity.

Sessions track overall progress: which commit is being parsed, how many work units were dispatched, how many have completed, and the current stage (scanning, parsing, resolving references, or running inference).

Work units track individual task state: pending, processing, completed, or failed, along with retry counts and error messages.

File state tracks which specific files within a work unit have been parsed.

When a parse request arrives, we check for an incomplete session before starting fresh. If one exists, we query for pending or failed work units, reset the Redis counters, and dispatch only the incomplete units. Workers skip files already present in Neo4j. This way, a crash at hour 10 of a 12-hour job loses roughly 20 minutes of progress rather than 10 hours.
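The resume path can be sketched against a minimal version of the state model described above. The dataclass names, the reset_counters and dispatch callbacks, and the in-memory representation are assumptions; in the real system these records live in PostgreSQL and the parsed files in Neo4j. Following the text, only pending or failed units are re-dispatched, and workers skip files the graph already contains.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Optional


class UnitStatus(Enum):
    PENDING = "pending"
    PROCESSING = "processing"
    COMPLETED = "completed"
    FAILED = "failed"


@dataclass
class WorkUnit:
    unit_id: int
    files: list[str]
    status: UnitStatus = UnitStatus.PENDING
    retry_count: int = 0
    error_message: Optional[str] = None


@dataclass
class ParseSession:
    session_id: str
    commit_sha: str
    stage: str  # "scanning", "parsing", "resolving_references", or "running_inference"
    units: list[WorkUnit] = field(default_factory=list)


def resume_session(session: ParseSession,
                   reset_counters: Callable[[str], None],
                   dispatch: Callable[[int], None]) -> list[int]:
    """Re-dispatch only pending or failed units of an interrupted session; returns their IDs."""
    to_retry = [u for u in session.units if u.status in (UnitStatus.PENDING, UnitStatus.FAILED)]
    reset_counters(session.session_id)  # hypothetical: reset the Redis completion set and counter
    for unit in to_retry:
        unit.status = UnitStatus.PENDING
        dispatch(unit.unit_id)
    return [u.unit_id for u in to_retry]


def files_to_parse(unit: WorkUnit, files_in_graph: set[str]) -> list[str]:
    """Worker side: skip files whose nodes already exist in Neo4j from an earlier attempt."""
    return [f for f in unit.files if f not in files_in_graph]
```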

Bootstrap recovery handles the case where session tracking is corrupted but Neo4j contains partial state. We query the graph for existing nodes, compare against the filesystem, and reconstruct which work remains. It's slower than normal resume but provides a fallback when primary tracking fails.
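The comparison at the heart of bootstrap recovery amounts to a set difference between parseable files on disk and files already represented in the graph. In this sketch the extension list and function names are hypothetical, and files_in_graph is assumed to come from a query over FILE nodes; the remaining files would then be re-packed into new work units.

```python
import os

# Hypothetical: the real set depends on which tree-sitter grammars are configured.
PARSEABLE_EXTENSIONS = {".py", ".java", ".go", ".ts", ".js"}


def list_parseable_files(repo_root: str) -> set[str]:
    """Walk the checkout and return repo-relative, '/'-separated paths of parseable files."""
    found = set()
    for dirpath, dirnames, filenames in os.walk(repo_root):
        dirnames[:] = [d for d in dirnames if d != ".git"]  # don't descend into VCS metadata
        for name in filenames:
            if os.path.splitext(name)[1] in PARSEABLE_EXTENSIONS:
                rel = os.path.relpath(os.path.join(dirpath, name), repo_root)
                found.add(rel.replace(os.sep, "/"))
    return found


def remaining_work(on_disk: set[str], files_in_graph: set[str]) -> list[str]:
    """Files present in the checkout but missing from the graph, in a stable order."""
    return sorted(on_disk - files_in_graph)
```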

Cross-Reference Resolution

References between code in different directories can't be resolved during parallel parsing, because worker A doesn't know which identifiers worker B defined. Instead, each worker tracks two things: the identifiers its files define (function names, class names), and the identifiers its files reference.

After all workers complete, the finalization phase aggregates defines and references across the full repository. For each reference, we look up whether any worker defined that identifier. Matches become CALLS, INHERITS, or IMPLEMENTS edges in the final graph. Unresolved references (calls to external libraries, standard library functions) are ignored.
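The finalization join can be sketched as a dictionary from identifier to defining nodes, built from every work unit's defines and then probed with every reference. The UnitSymbols record and the (source, relationship, identifier) tuple format are assumptions. Matching purely on names is also a simplification: when several nodes define the same identifier, this sketch links to all of them, whereas a more precise resolver would consult imports or scopes.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class UnitSymbols:
    """What one work unit reported: identifiers it defines and the references it made."""
    defines: list[tuple[str, str]] = field(default_factory=list)          # (identifier, defining node_id)
    references: list[tuple[str, str, str]] = field(default_factory=list)  # (source node_id, CALLS/INHERITS/IMPLEMENTS, identifier)


def resolve_references(units: list[UnitSymbols]) -> list[tuple[str, str, str]]:
    """Global phase: turn name references into edges; identifiers nobody defines are dropped."""
    definitions: dict[str, list[str]] = defaultdict(list)
    for unit in units:
        for identifier, node_id in unit.defines:
            definitions[identifier].append(node_id)

    edges = []
    for unit in units:
        for source, rel_type, identifier in unit.references:
            for target in definitions.get(identifier, []):  # external/stdlib names have no entry
                edges.append((source, rel_type, target))
    return edges
```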

This two-phase approach, local parsing followed by global resolution, lets workers operate independently while still capturing cross-directory relationships.

Results

The 35-million-line repository that previously couldn't complete now finishes in a few hours. Memory per worker stays flat regardless of repository size, thanks to bounded, bin-packed work units and database-stored file lists, and a failure loses only the incomplete work units.

The same architecture powers our distributed inference. After parsing completes, we generate docstrings and embeddings for every node using parallel LLM calls. The patterns translate directly: bin-packed work units, Redis coordination, database-stored payloads, checkpoint-based resume.

The knowledge graph transforms how agents interact with large codebases. Instead of searching text, they query structure. Instead of guessing at relationships, they traverse edges. The distributed parsing pipeline makes this possible for codebases of any size, turning what was once a multi-day ordeal into a routine two-hour operation.
